For this part, we trained a convolutional neural network (CNN) to classify images from the Fashion MNIST dataset into 10 classes. The classes are numbered as follows:

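| Label | Class |
| --- | --- |
| 0 | T-shirt/top |
| 1 | Trouser |
| 2 | Pullover |
| 3 | Dress |
| 4 | Coat |
| 5 | Sandal |
| 6 | Shirt |
| 7 | Sneaker |
| 8 | Bag |
| 9 | Ankle boot |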

The CNN we used had the following structure:

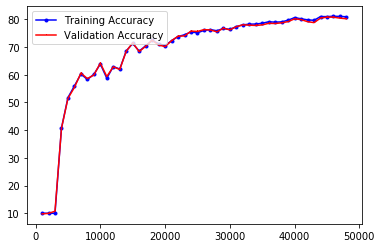

Applying the trained CNN to the test set gives the following accuracies, first broken down by class and then computed over all test images.

Test Accuracy of 0: T-shirt/top : 80 %

Test Accuracy of 1: Trouser : 92 %

Test Accuracy of 2: Pullover : 76 %

Test Accuracy of 3: Dress : 89 %

Test Accuracy of 4: Coat : 67 %

Test Accuracy of 5: Sandal : 93 %

Test Accuracy of 6: Shirt : 28 %

Test Accuracy of 7: Sneaker : 79 %

Test Accuracy of 8: Bag : 95 %

Test Accuracy of 9: Ankle boot : 98 %

Test Accuracy of the network on the test images: 80 %
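The per-class and overall figures above can be reproduced from a list of true labels and a list of predicted labels. The following is a minimal sketch in plain Python, not the code used for this project: the function name `per_class_accuracy` and its arguments are hypothetical, and the data in the demo is a toy example rather than real model output.

```python
from collections import Counter

# Class names in label order, as listed in the results above.
CLASSES = ["T-shirt/top", "Trouser", "Pullover", "Dress", "Coat",
           "Sandal", "Shirt", "Sneaker", "Bag", "Ankle boot"]


def per_class_accuracy(labels, predictions, num_classes=len(CLASSES)):
    """Return (per-class accuracies, overall accuracy) as fractions in [0, 1].

    `labels` and `predictions` are equal-length, non-empty sequences of
    integer class indices (hypothetical names, assumed for this sketch).
    """
    total = Counter(labels)
    correct = Counter(y for y, p in zip(labels, predictions) if y == p)
    # A class with no test samples has undefined accuracy, reported as NaN.
    per_class = [correct[c] / total[c] if total[c] else float("nan")
                 for c in range(num_classes)]
    overall = sum(correct.values()) / len(labels)
    return per_class, overall


if __name__ == "__main__":
    # Toy data only: three classes, six samples.
    labels = [0, 0, 1, 1, 2, 2]
    predictions = [0, 1, 1, 1, 2, 0]
    per_class, overall = per_class_accuracy(labels, predictions, num_classes=3)
    for c, acc in enumerate(per_class):
        print(f"Test Accuracy of {c}: {CLASSES[c]} : {acc:.0%}")
    print(f"Test Accuracy of the network on the test images: {overall:.0%}")
```

Because the Fashion MNIST test set contains the same number of images for every class, the overall accuracy equals the mean of the per-class accuracies. The ten per-class values reported above average to 79.7 %, which is consistent with the reported overall figure of 80 %.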

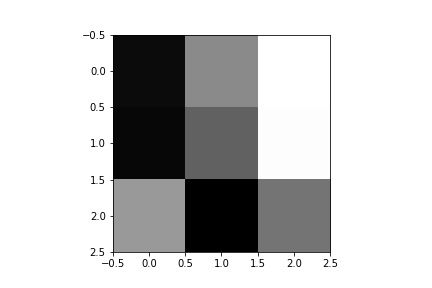

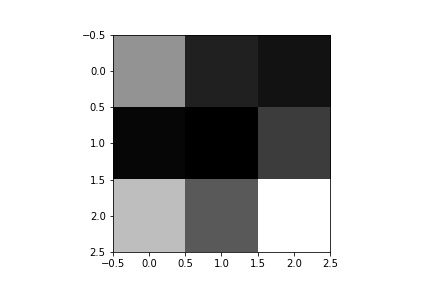

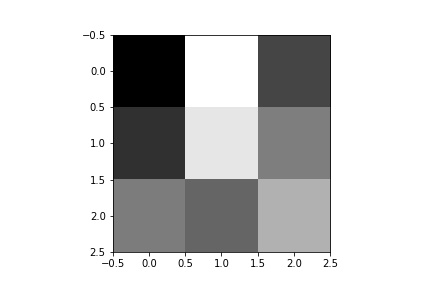

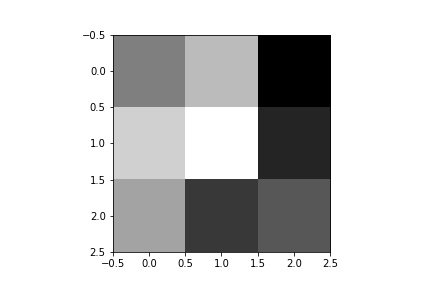

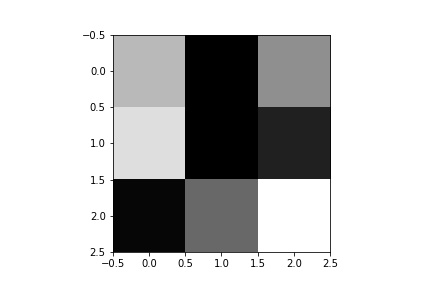

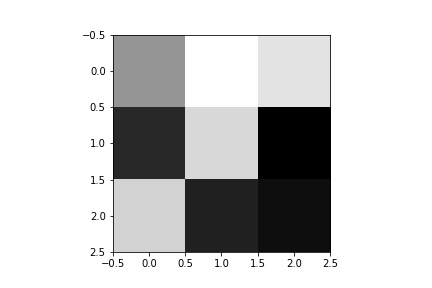

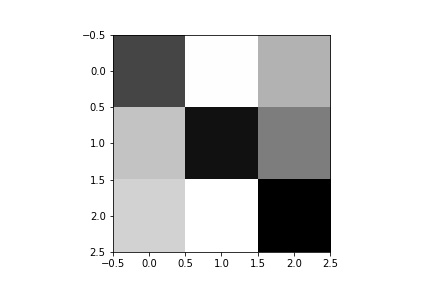

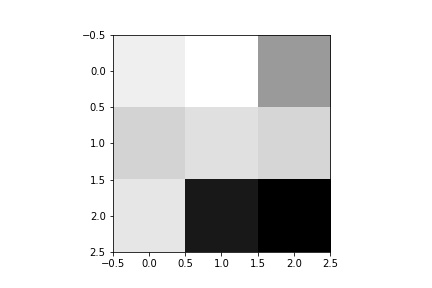

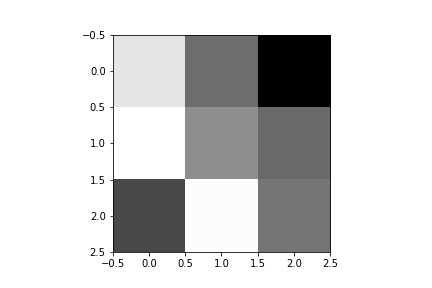

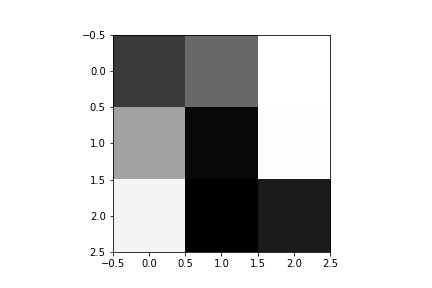

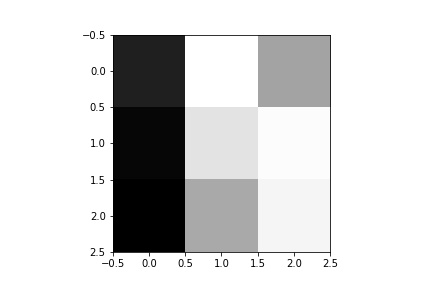

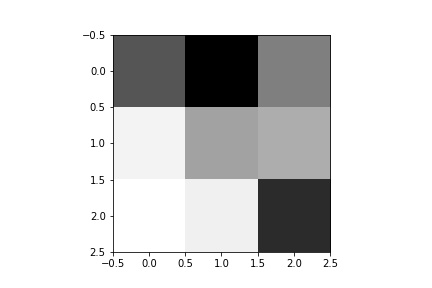

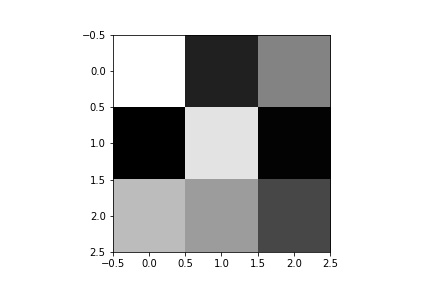

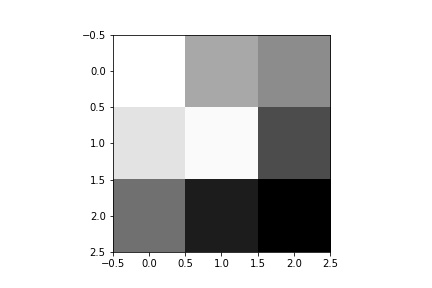

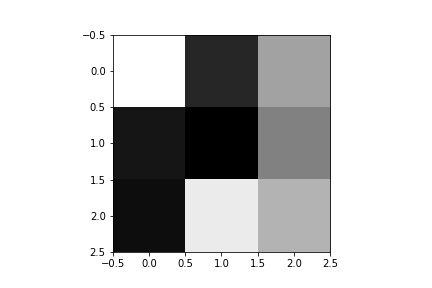

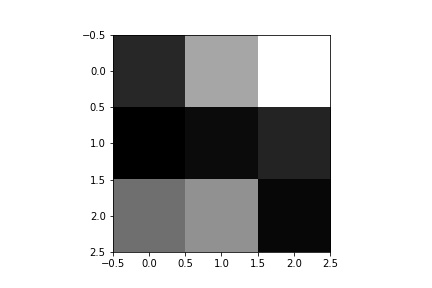

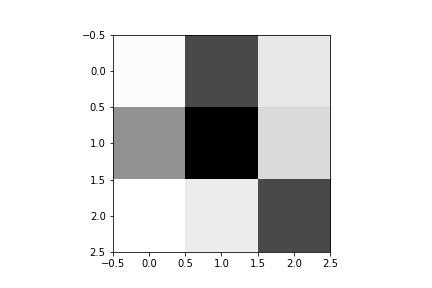

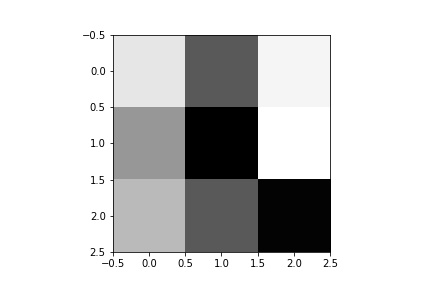

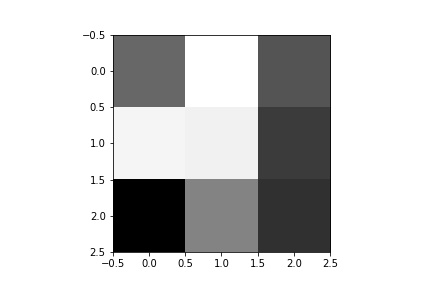

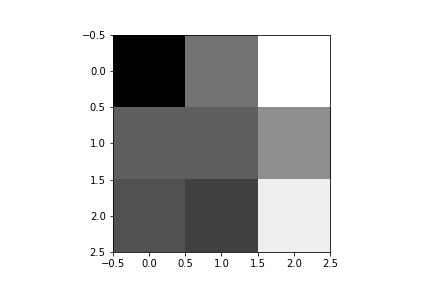

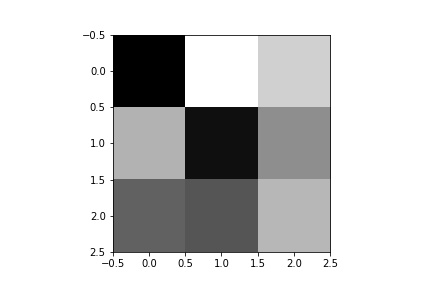

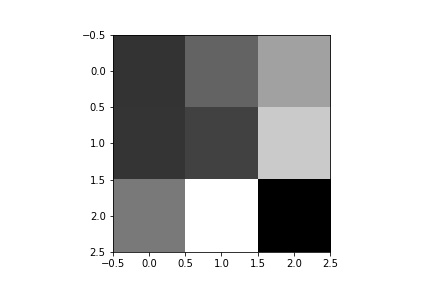

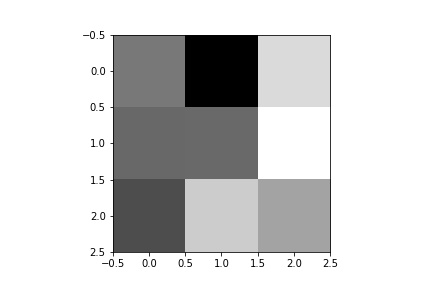

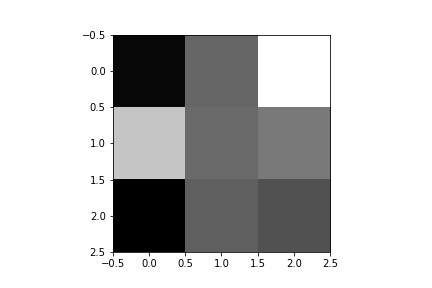

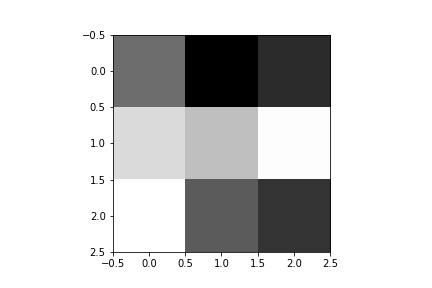

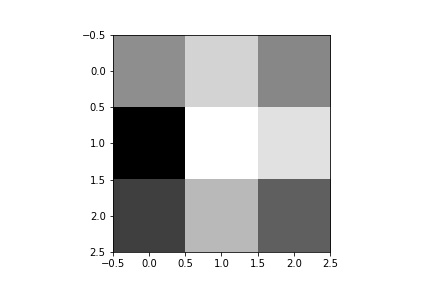

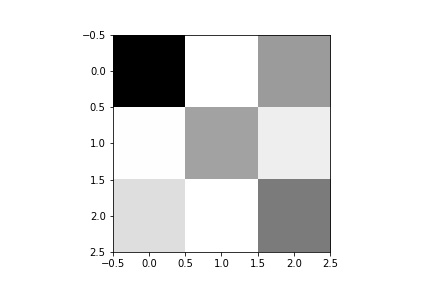

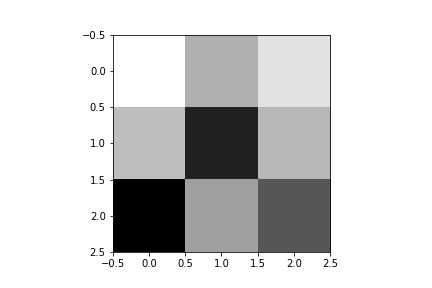

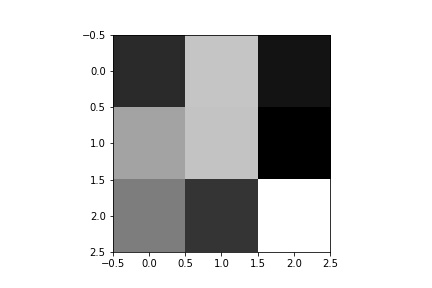

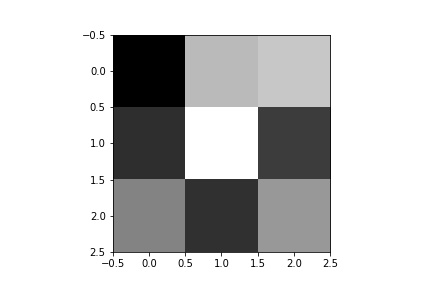

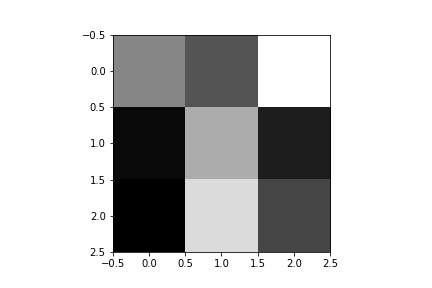

If we display the filters as images, this is what each channel of the first convolutional layer looks like:

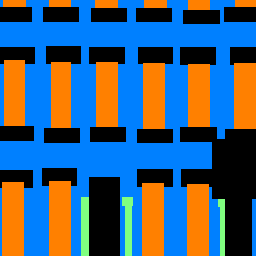

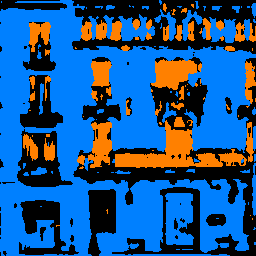

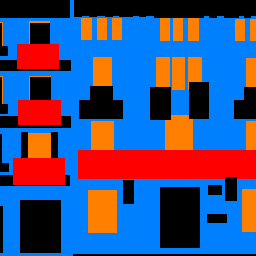
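Learned filter weights can be negative and have no fixed scale, so showing a first-layer filter as an image usually involves rescaling it to a displayable range. The sketch below assumes per-filter min-max scaling to [0, 1]; this is one common choice and not necessarily the mapping used for the filter images in this part (a plotting library may apply a similar normalization automatically). The name `normalize_filter` is hypothetical.

```python
def normalize_filter(kernel):
    """Min-max scale a 2D filter (a list of rows of floats) into [0, 1].

    Assumption for this sketch: each filter is scaled independently, so
    brightness is only comparable within a filter, not across filters.
    """
    flat = [w for row in kernel for w in row]
    lo, hi = min(flat), max(flat)
    if hi == lo:
        # Constant filter: map every weight to mid-gray to avoid dividing by zero.
        return [[0.5 for _ in row] for row in kernel]
    return [[(w - lo) / (hi - lo) for w in row] for row in kernel]


if __name__ == "__main__":
    # Toy 2x2 "filter" with made-up weights, for illustration only.
    toy = [[-1.0, 0.0],
           [1.0, 3.0]]
    for row in normalize_filter(toy):
        print(row)
```

With this scaling, the most negative weight in a filter is drawn as black and the most positive as white, which makes the oriented edge or blob patterns in the filters easier to see.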

In this part, we trained a CNN to perform semantic segmentation on the Mini Facade dataset. For the CNN, we used the following architecture:
