Ryan Meyer

CS 194-26 Project 5

Part A

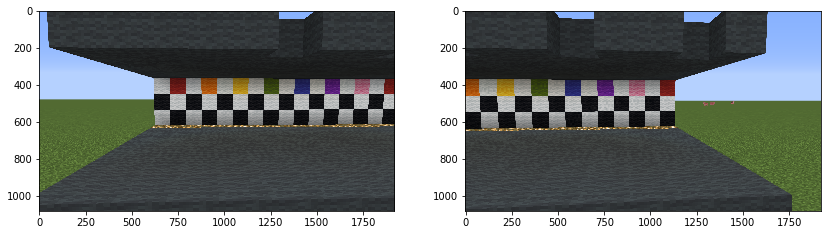

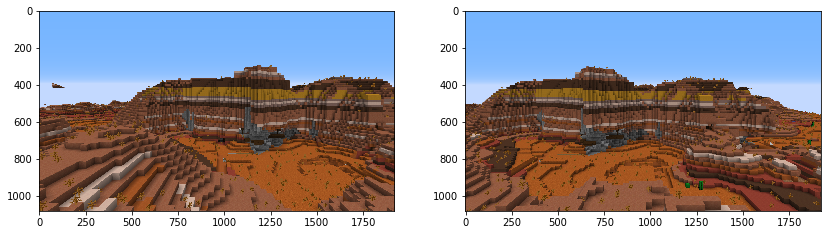

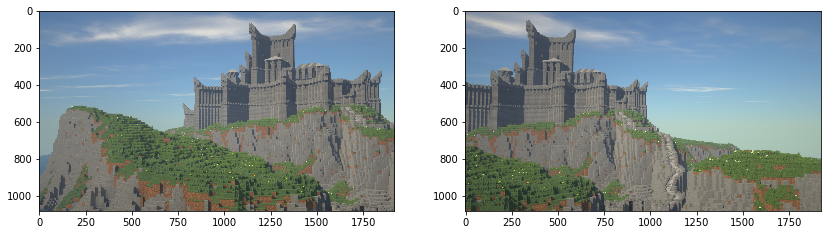

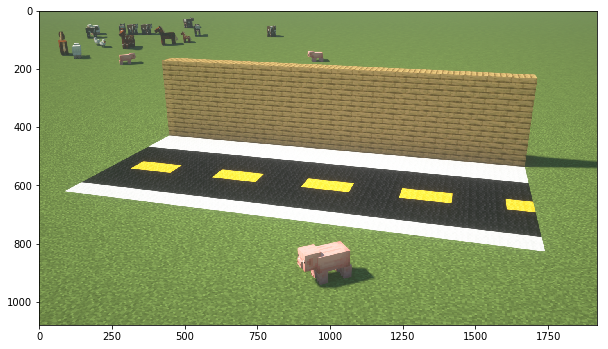

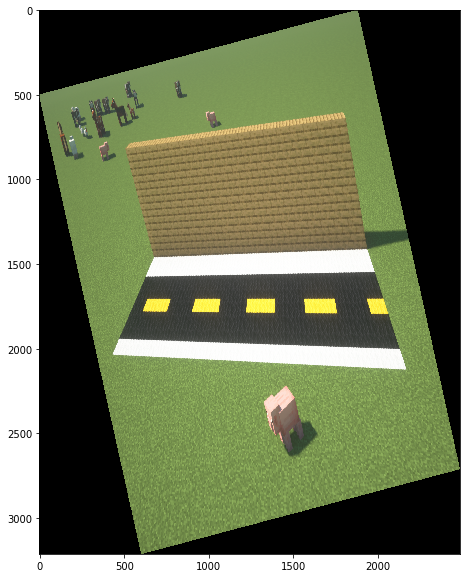

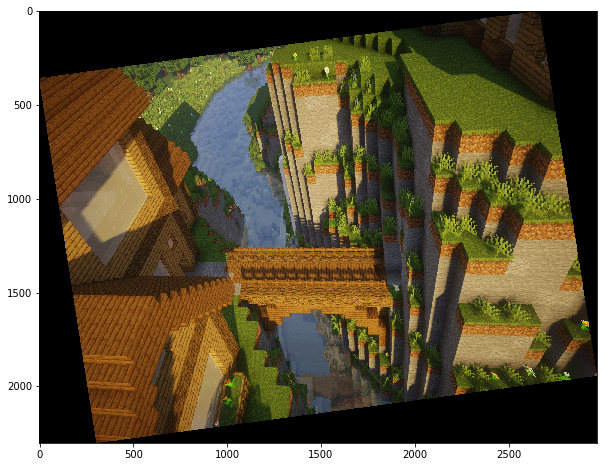

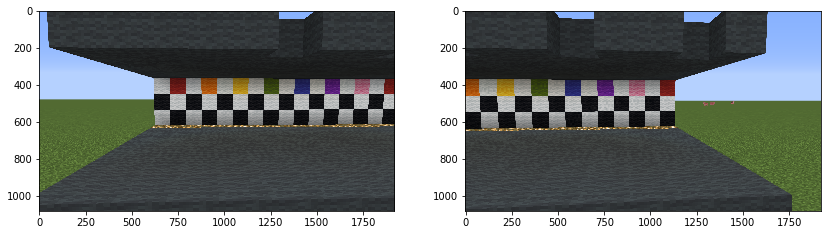

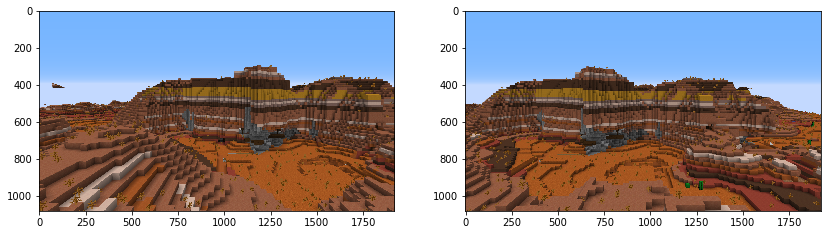

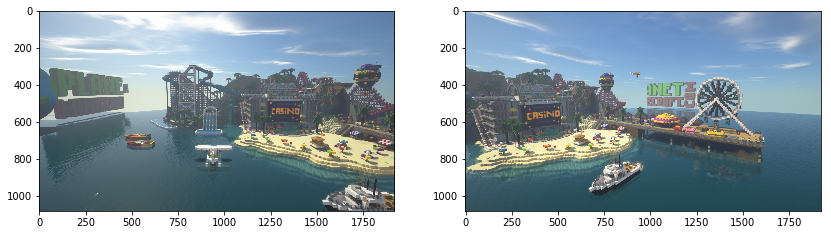

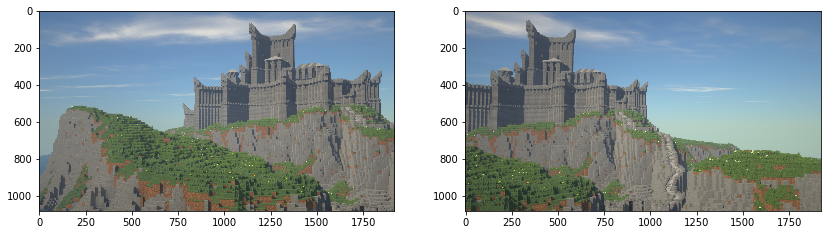

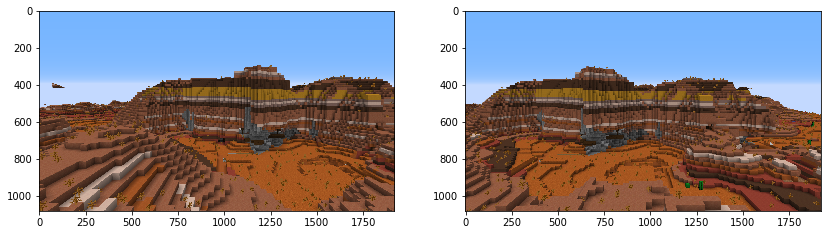

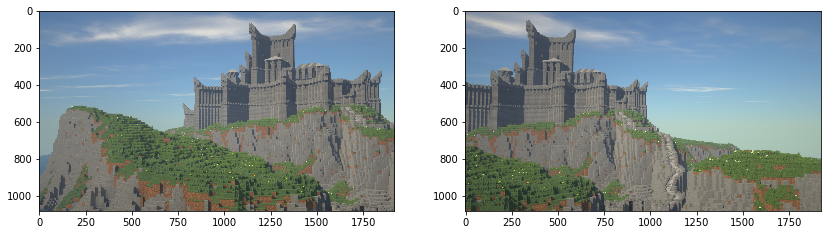

1) Shoot Pictures

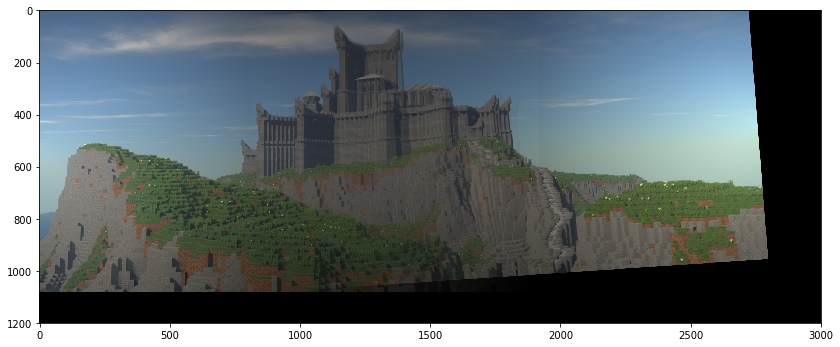

Here are all of the paired photos I took for this project. Because of the shelter-in-place order currently in effect, I took all of my pictures inside the game Minecraft. This let me build or use any structure, know exactly which surfaces are planar, and easily change the field of view or camera position for each picture.

2) Recover Homographies, Image warping and rectification

For this section, I wrote code that estimates the homography mapping one set of points to another, using least squares to minimize the error in a linear system in the transformation parameters. I then use inverse warping to transform the original images. By choosing target points that are fronto-parallel, I can warp an image in which the planar surfaces are misaligned with the camera view into one in which they are more closely aligned.
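As an illustration of the least-squares step, here is a minimal sketch rather than my exact project code. It assumes points are stored as (x, y) rows and that the bottom-right entry of the homography is fixed to 1, which leaves eight unknowns. The function names `compute_homography` and `apply_homography` are made up for this example.

```python
import numpy as np

def compute_homography(src, dst):
    """Estimate the 3x3 homography H that maps src points onto dst points.

    src, dst: (N, 2) arrays of (x, y) coordinates, with N >= 4.
    H[2, 2] is fixed to 1 and the other 8 entries are found by least squares.
    """
    src = np.asarray(src, dtype=float)
    dst = np.asarray(dst, dtype=float)
    n = src.shape[0]
    A = np.zeros((2 * n, 8))
    b = np.zeros(2 * n)
    for i, ((x, y), (u, v)) in enumerate(zip(src, dst)):
        # From u = (h1*x + h2*y + h3) / (h7*x + h8*y + 1), and likewise for v.
        A[2 * i] = [x, y, 1, 0, 0, 0, -u * x, -u * y]
        A[2 * i + 1] = [0, 0, 0, x, y, 1, -v * x, -v * y]
        b[2 * i] = u
        b[2 * i + 1] = v
    h, *_ = np.linalg.lstsq(A, b, rcond=None)
    return np.append(h, 1.0).reshape(3, 3)

def apply_homography(H, pts):
    """Map (N, 2) points through H, dividing out the homogeneous coordinate."""
    pts = np.asarray(pts, dtype=float)
    homog = np.hstack([pts, np.ones((len(pts), 1))])
    mapped = homog @ H.T
    return mapped[:, :2] / mapped[:, 2:3]
```

With exactly four correspondences the system is solved exactly; with more, the extra points average out clicking error, which matters when the correspondences are picked by hand.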

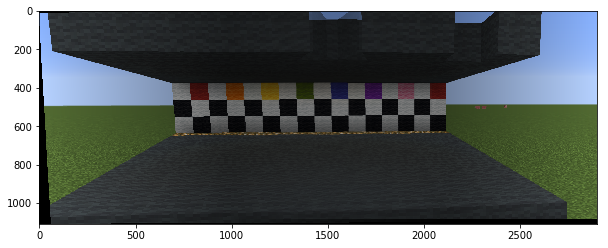

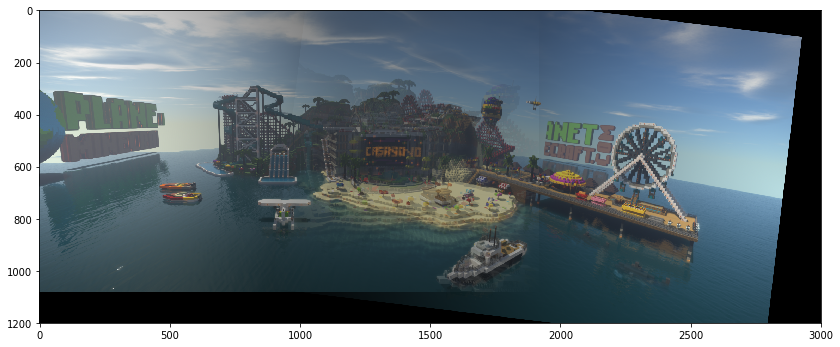

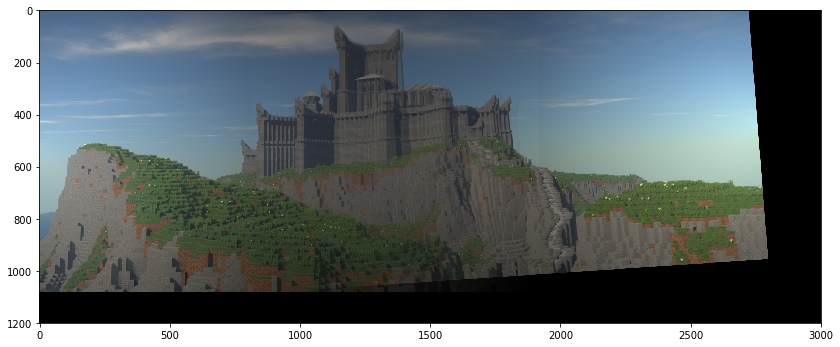

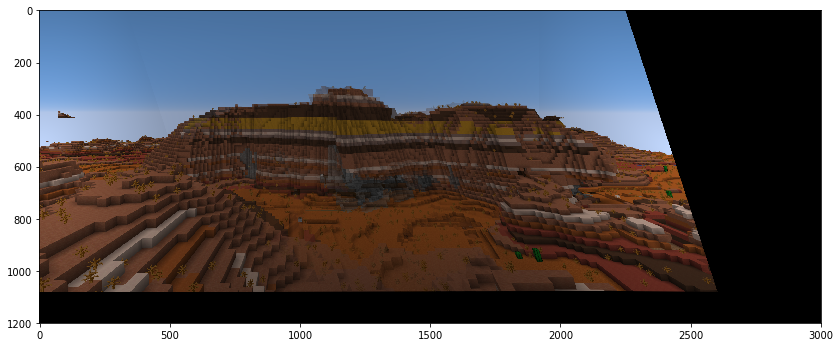

3) Mosaics

By warping one of two images taken from different perspectives into the frame of the other and then blending the result, one can create mosaics or panoramas. With manually specified correspondences, the final images are not perfectly aligned and show some ghosting, but I tried to get them as close as possible to the appearance of a single photo. For the next part of the project, I need to improve my blending and cropping of the warped images, and then implement automatic point correspondence.
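The warp-then-blend idea can be sketched as follows. This is a simplified illustration, not my project code: it assumes nearest-neighbor sampling, a homography that maps source (x, y) coordinates onto the output canvas without the homogeneous coordinate reaching zero, and a plain weighted average for blending. The names `inverse_warp` and `blend_average` are hypothetical.

```python
import numpy as np

def inverse_warp(img, H, out_shape):
    """Warp img onto a canvas of shape (height, width) using inverse mapping.

    H maps source (x, y) to canvas (x, y). Each canvas pixel is sent back
    through H^-1 and filled from the nearest source pixel, if one exists.
    Returns the warped image and a mask that is 1 where data was found.
    """
    h_out, w_out = out_shape
    ys, xs = np.mgrid[0:h_out, 0:w_out]
    ys, xs = ys.ravel(), xs.ravel()
    canvas_pts = np.stack([xs, ys, np.ones_like(xs)]).astype(float)
    src = np.linalg.inv(H) @ canvas_pts
    sx = np.rint(src[0] / src[2]).astype(int)
    sy = np.rint(src[1] / src[2]).astype(int)
    inside = (sx >= 0) & (sx < img.shape[1]) & (sy >= 0) & (sy < img.shape[0])
    out = np.zeros((h_out, w_out) + img.shape[2:], dtype=float)
    mask = np.zeros((h_out, w_out), dtype=float)
    out[ys[inside], xs[inside]] = img[sy[inside], sx[inside]]
    mask[ys[inside], xs[inside]] = 1.0
    return out, mask

def blend_average(img_a, mask_a, img_b, mask_b):
    """Weighted average of two images that already share one canvas."""
    extra = (1,) * (img_a.ndim - 2)  # broadcast masks over color channels
    w_a = mask_a.reshape(mask_a.shape + extra)
    w_b = mask_b.reshape(mask_b.shape + extra)
    total = w_a + w_b
    safe = np.where(total > 0, total, 1.0)  # avoid dividing by zero
    return (img_a * w_a + img_b * w_b) / safe
```

Replacing the 0/1 masks with weights that fall off toward each image's border would give a feathered blend, which is one way to soften visible seams.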

Part B: Multi-Image Matching using Multi-Scale Oriented Patches

Using the process described in Brown, Szeliski, and Winder's paper "Multi-Image Matching using Multi-Scale Oriented Patches" (MOPS), I can automate and improve the task of selecting interest points to match between the two images.

1) Detecting Corner Features in an Image

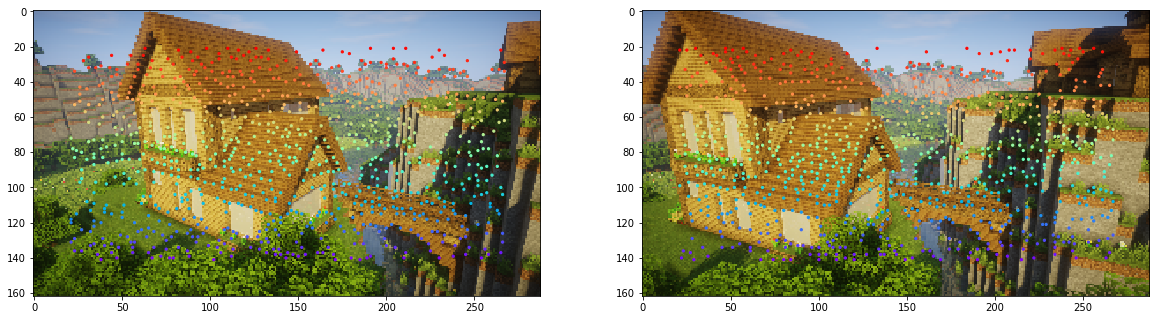

I start with Harris corner detection to collect as many candidate interest points as possible.

Next, I reduce the number of corners using Adaptive Non-Maximal Suppression (ANMS), which also keeps the remaining points well spaced.
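A compact sketch of the ANMS step is below. It follows the suppression-radius definition from the MOPS paper, with the robustness constant 0.9 suggested there. The function name `anms` and the brute-force pairwise distance computation are assumptions made for illustration, and the latter needs O(N^2) memory, so it only suits a few thousand corners.

```python
import numpy as np

def anms(coords, strengths, num_keep, c_robust=0.9):
    """Return indices of the num_keep points with the largest suppression radius.

    coords: (N, 2) corner positions; strengths: (N,) Harris responses.
    A point's radius is the distance to the nearest point that is
    sufficiently stronger, i.e. strengths[i] < c_robust * strengths[j].
    """
    coords = np.asarray(coords, dtype=float)
    strengths = np.asarray(strengths, dtype=float)
    diff = coords[:, None, :] - coords[None, :, :]
    dist2 = np.sum(diff ** 2, axis=-1)  # (N, N) squared distances
    # stronger[i, j] is True when point j dominates point i.
    stronger = strengths[:, None] < c_robust * strengths[None, :]
    dist2 = np.where(stronger, dist2, np.inf)
    radii2 = dist2.min(axis=1)  # stays inf for points nothing dominates
    order = np.argsort(-radii2)  # largest radius first
    return order[:num_keep]
```

Keeping the points with the largest radii, rather than the largest responses, is what spreads the surviving corners across the image instead of clustering them on the most textured region.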

2) Extracting Feature Descriptors

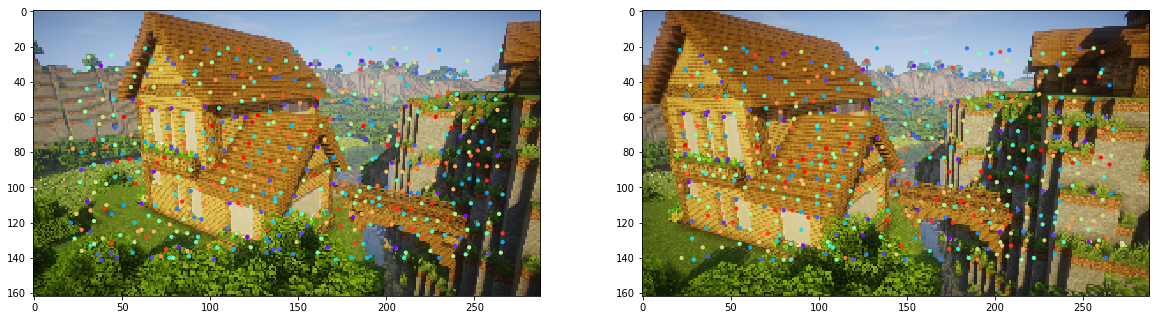

The next step is to sample a 40x40 patch of pixels around each interest point, subsample it down to an 8x8 patch, and store the normalized result as that point's feature descriptor.
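The descriptor step might look roughly like the sketch below. It makes several simplifying assumptions that are not taken from my code: the patch is axis-aligned (no rotation), the downsampling averages each 5x5 block instead of blurring and then sampling every fifth pixel, and "normalized" means zero mean and unit standard deviation. The name `extract_descriptor` is hypothetical.

```python
import numpy as np

def extract_descriptor(img, y, x, window=40, size=8):
    """Build a normalized size*size descriptor from a window around (y, x).

    img: 2D grayscale array. Returns a flat vector of length size*size,
    or None if the window leaves the image or the patch has no contrast.
    """
    half = window // 2
    if y - half < 0 or x - half < 0:
        return None
    if y + half > img.shape[0] or x + half > img.shape[1]:
        return None
    patch = img[y - half:y + half, x - half:x + half].astype(float)
    step = window // size
    # Average each step x step block: a crude low-pass filter plus subsample.
    small = patch.reshape(size, step, size, step).mean(axis=(1, 3))
    std = small.std()
    if std < 1e-8:
        return None  # flat patch, normalization would divide by zero
    # Bias/gain normalization: zero mean, unit standard deviation.
    return ((small - small.mean()) / std).ravel()
```

Sampling from a smoothed, larger window makes the descriptor less sensitive to small errors in the interest point location, and the normalization makes it insensitive to brightness and contrast changes between the two photos.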

3) Matching Feature Descriptors

Then, to find corresponding features, I match pairs of descriptors from the two images and keep only the matches that pass a threshold, which limits the final number of matches. This threshold is different for each set of images.
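One common way to threshold matches, and the one the MOPS paper recommends, is to compare the distance to the nearest neighbor with the distance to the second-nearest neighbor. The sketch below assumes that scheme; the function name `match_descriptors` and the default ratio of 0.6 on squared distances are illustrative choices, not the values I used.

```python
import numpy as np

def match_descriptors(desc_a, desc_b, ratio=0.6):
    """Match rows of desc_a to rows of desc_b with a nearest-neighbor ratio test.

    desc_a: (Na, D), desc_b: (Nb, D) with Nb >= 2.
    Returns a (K, 2) integer array of (index_in_a, index_in_b) pairs.
    """
    desc_a = np.asarray(desc_a, dtype=float)
    desc_b = np.asarray(desc_b, dtype=float)
    # Squared Euclidean distance between every pair of descriptors.
    d2 = ((desc_a[:, None, :] - desc_b[None, :, :]) ** 2).sum(axis=-1)
    order = np.argsort(d2, axis=1)
    rows = np.arange(len(desc_a))
    best, second = order[:, 0], order[:, 1]
    # Accept only when the best match is clearly better than the runner-up.
    keep = d2[rows, best] < ratio * d2[rows, second]
    return np.column_stack([rows[keep], best[keep]])
```

Lowering `ratio` keeps fewer but more reliable matches, which is the same trade-off as tuning the threshold per image pair.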

4) RANSAC for Computing the Best Homography

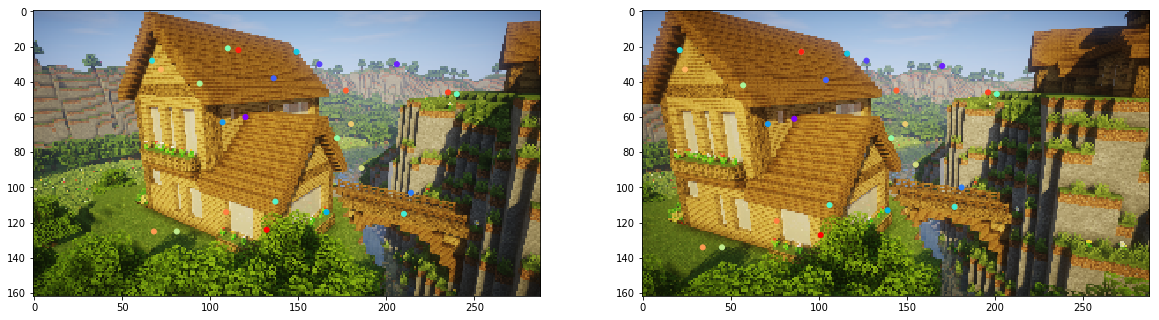

To finalize the set of points used to compute a homography for warping the images, I use the RANSAC algorithm to reject outlier pairs.
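The RANSAC loop can be sketched as below. This is a generic 4-point version under stated assumptions, not my exact code: the iteration count, the 2-pixel inlier tolerance, the fixed random seed, and the names `fit_homography`, `project`, and `ransac_homography` are all chosen for illustration.

```python
import numpy as np

def fit_homography(src, dst):
    """Least-squares homography from (N, 2) src to dst points, H[2, 2] = 1."""
    rows, rhs = [], []
    for (x, y), (u, v) in zip(src, dst):
        rows.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        rows.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        rhs.extend([u, v])
    h, *_ = np.linalg.lstsq(np.array(rows, dtype=float),
                            np.array(rhs, dtype=float), rcond=None)
    return np.append(h, 1.0).reshape(3, 3)

def project(H, pts):
    """Apply H to (N, 2) points and divide out the homogeneous coordinate."""
    homog = np.hstack([pts, np.ones((len(pts), 1))]) @ H.T
    with np.errstate(divide="ignore", invalid="ignore"):
        return homog[:, :2] / homog[:, 2:3]

def ransac_homography(src, dst, n_iters=1000, tol=2.0, seed=0):
    """Return (H, inlier_mask) for the largest consensus set found."""
    src = np.asarray(src, dtype=float)
    dst = np.asarray(dst, dtype=float)
    rng = np.random.default_rng(seed)
    best = np.zeros(len(src), dtype=bool)
    for _ in range(n_iters):
        # Fit an exact homography to 4 random correspondences.
        idx = rng.choice(len(src), size=4, replace=False)
        H = fit_homography(src[idx], dst[idx])
        # Count how many correspondences agree with it within tol pixels.
        err = np.linalg.norm(project(H, src) - dst, axis=1)
        inliers = err < tol  # NaN errors compare False and are rejected
        if inliers.sum() > best.sum():
            best = inliers
    if best.sum() < 4:
        raise ValueError("RANSAC did not find a consensus set of 4 points")
    # Refit on every inlier for the final estimate.
    return fit_homography(src[best], dst[best]), best
```

Refitting on the whole consensus set at the end, instead of returning the best 4-point model, uses all of the trusted matches and is usually more stable.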

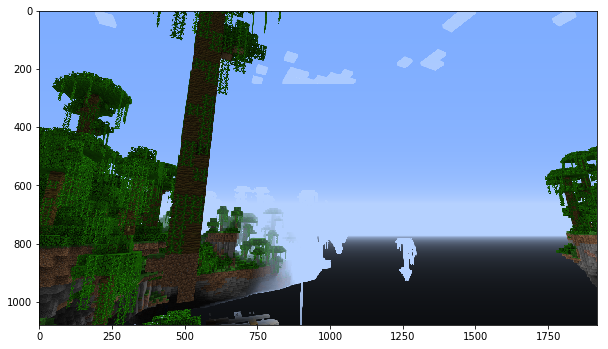

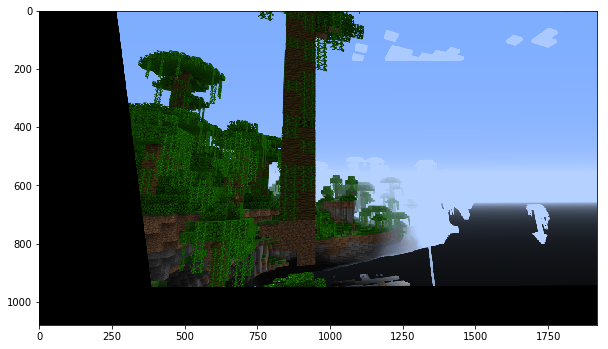

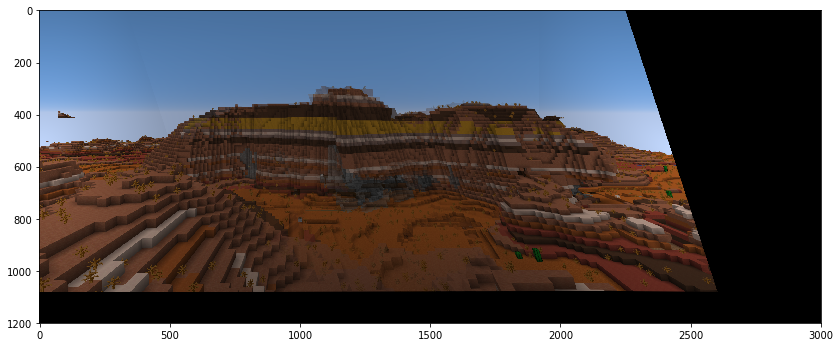

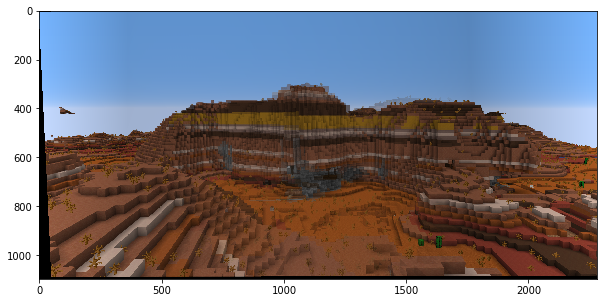

5) Mosaics

After computing the homography, I warp and then blend the images together as before to create a mosaic or panorama.

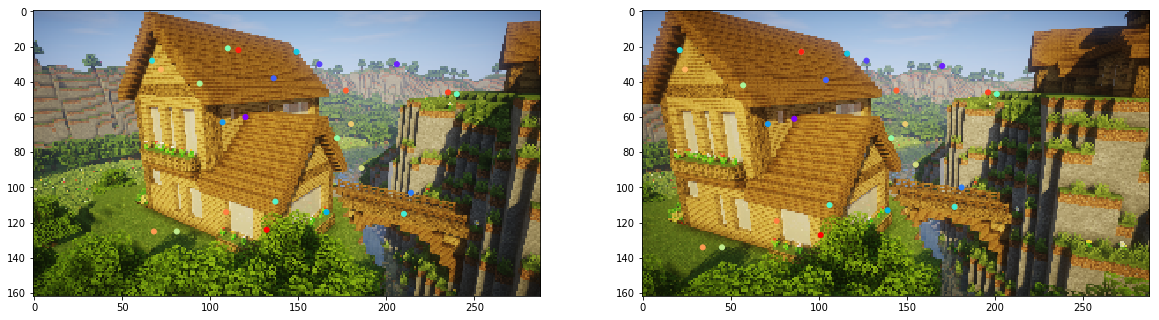

Additional mosaics.

Commentary on results

This project, especially Part B, was very challenging for me. I think I successfully implemented the process described in the MOPS paper, but the feature matching and/or RANSAC steps clearly need some tweaking and may have bugs, since my resulting set of points sometimes still contained a few outliers. I had to keep the threshold for the number of features low so that these potential outliers would not badly distort the warp in the final mosaics. This is why the final mosaics ended up blurrier than I would like.