Pair A

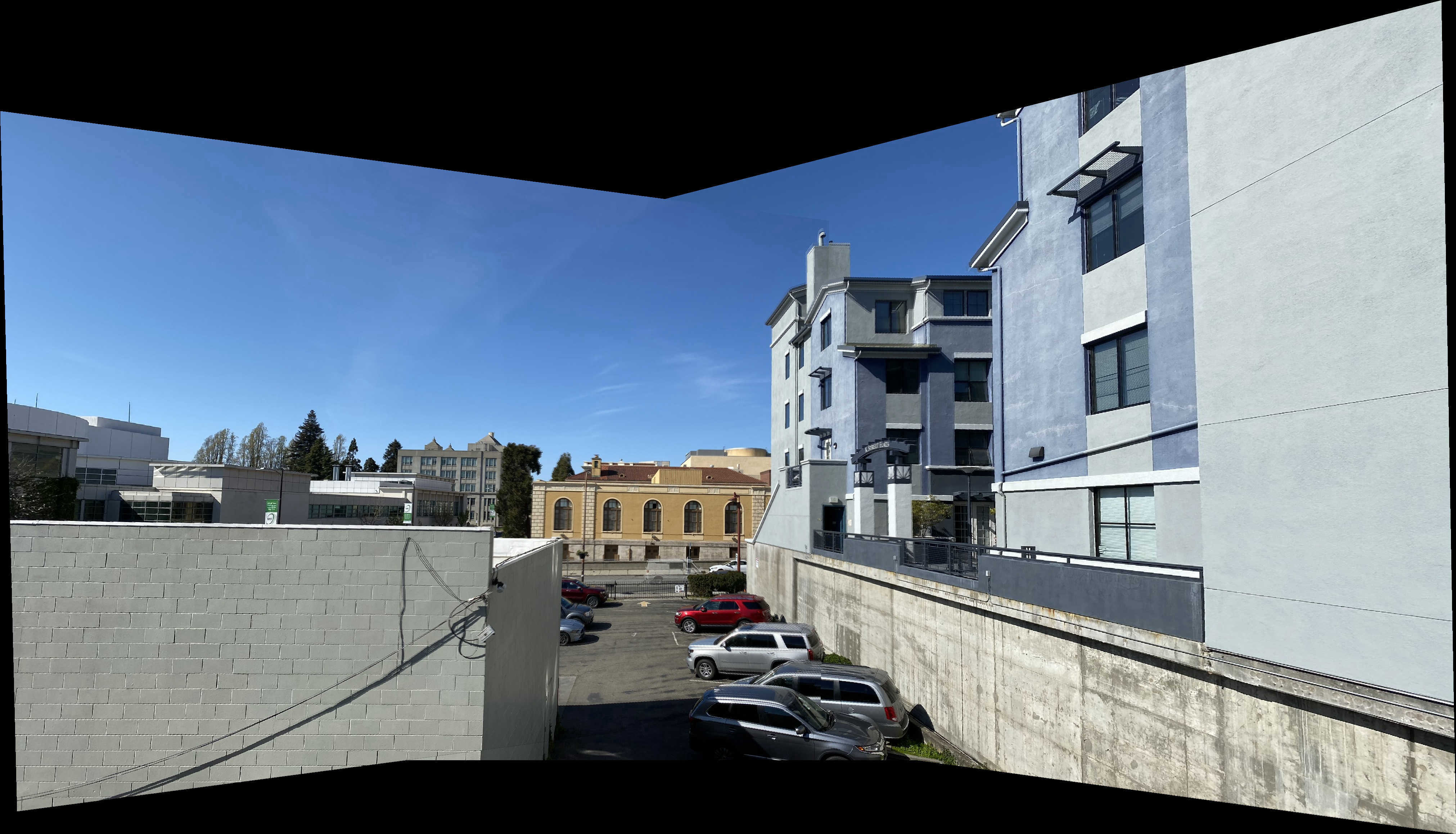

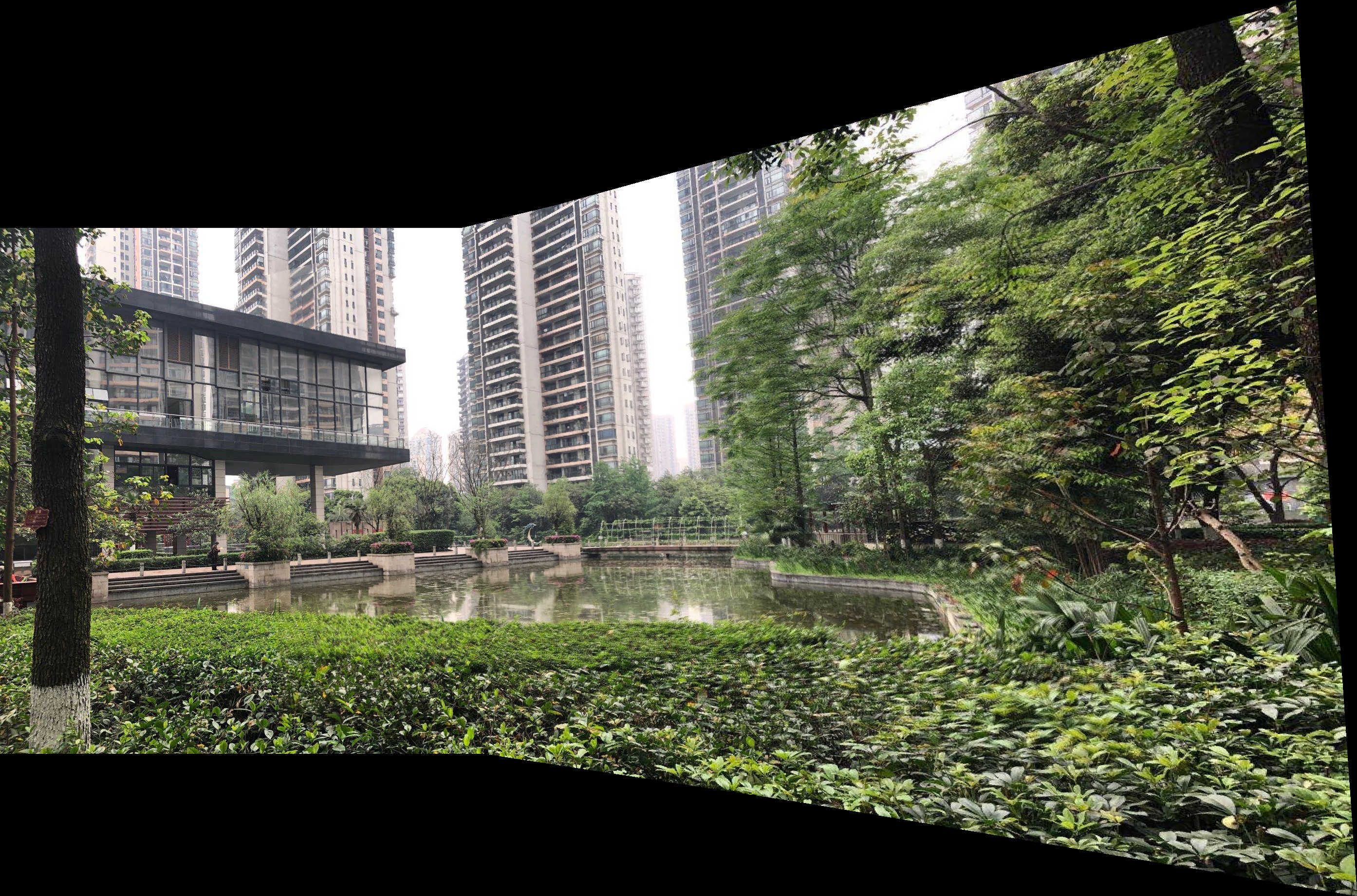

I took two pairs of pictures with an iPhone, locking both exposure and focus.

First, I used `ginput` in Python to select corresponding points in the two images.

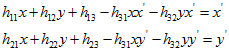

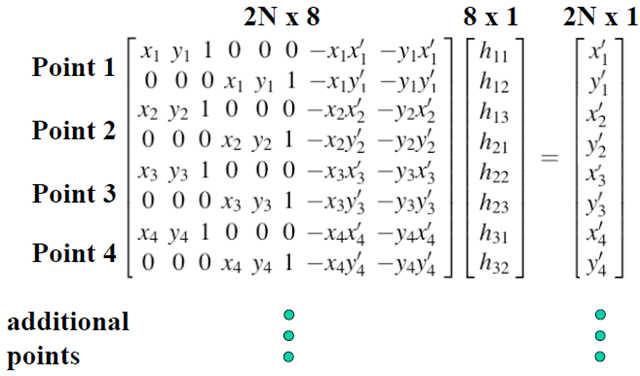

In our case, the transformation is a homography, $p' = Hp$ (in homogeneous coordinates, up to scale), where $H$ is a $3 \times 3$ matrix with 8 degrees of freedom (the lower-right entry is only a scale factor and can be set to 1). One way to recover the homography is from a set of corresponding point pairs $(p', p)$ taken from the two images.

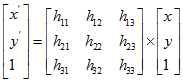

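Setting the lower-right entry $h_{33}$ to 1 leaves eight unknowns. Each correspondence $(x, y) \leftrightarrow (x', y')$ contributes two linear equations in these unknowns, one for $x'$ and one for $y'$, and stacking the equations for all correspondences gives a linear system $Ah = b$. Four correspondences determine $H$ exactly; with more points the system is overdetermined and is solved with least squares: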

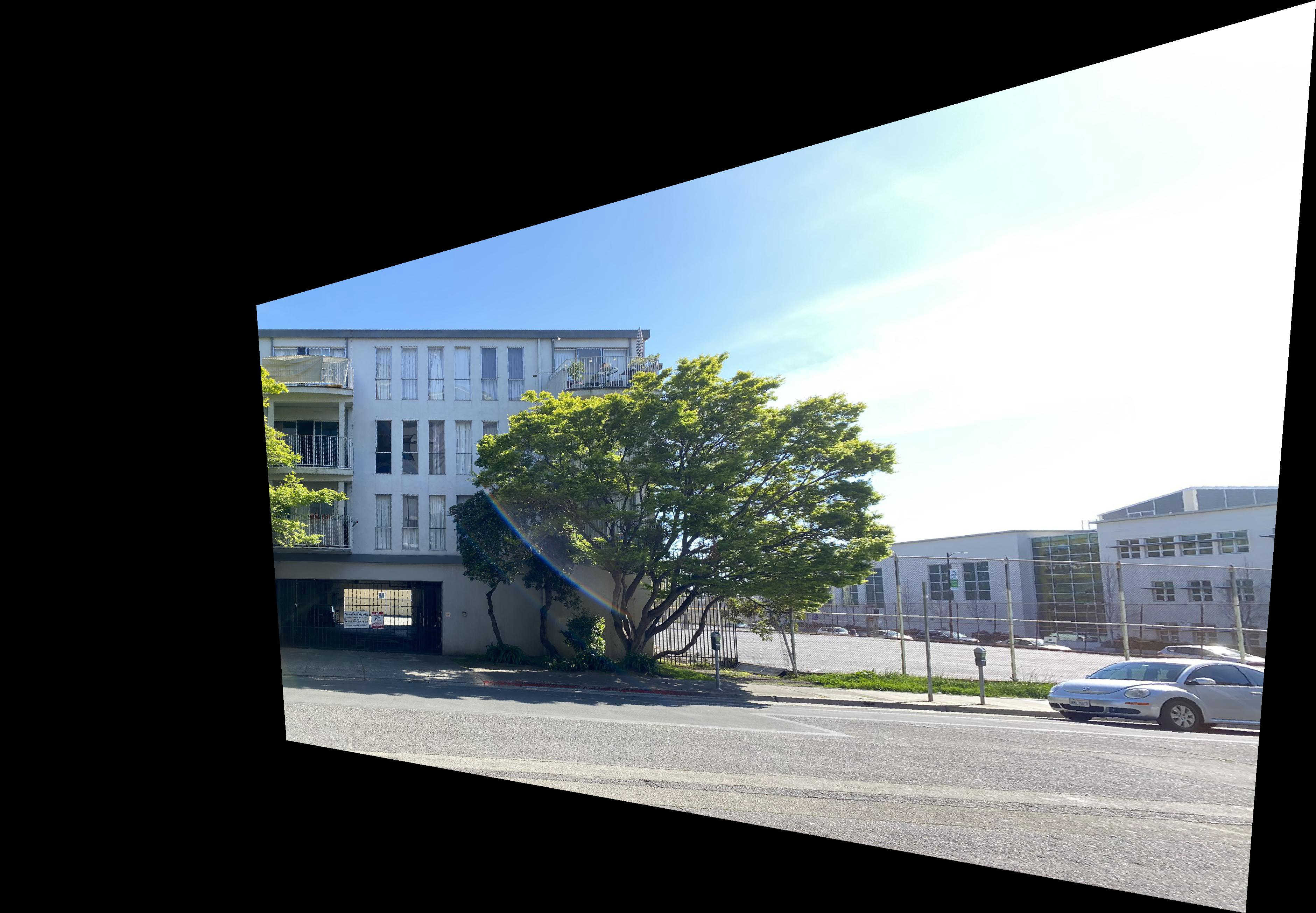
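For reference, a standard way to write these equations, assuming $p = (x, y, 1)^\top$ and $p' = (x', y', 1)^\top$ (notation chosen here), is to divide out the homogeneous scale and rearrange each ratio into a linear equation. Each correspondence then contributes the two rows below to $A$ and $b$:

$$
x' = \frac{h_{11}x + h_{12}y + h_{13}}{h_{31}x + h_{32}y + 1}, \qquad
y' = \frac{h_{21}x + h_{22}y + h_{23}}{h_{31}x + h_{32}y + 1}
$$

$$
\begin{bmatrix}
x & y & 1 & 0 & 0 & 0 & -x x' & -y x' \\
0 & 0 & 0 & x & y & 1 & -x y' & -y y'
\end{bmatrix}
\begin{bmatrix} h_{11} & h_{12} & h_{13} & h_{21} & h_{22} & h_{23} & h_{31} & h_{32} \end{bmatrix}^\top
=
\begin{bmatrix} x' \\ y' \end{bmatrix}
$$

$$
\hat{h} = \arg\min_h \lVert A h - b \rVert_2^2
$$

A minimal NumPy sketch of this least-squares fit follows. It assumes `im1_pts` and `im2_pts` are N × 2 arrays of (x, y) clicks with N ≥ 4; `compute_h` is a hypothetical name, not necessarily the author's function.

```python
import numpy as np


def compute_h(im1_pts, im2_pts):
    """Estimate H (with h33 = 1) such that im2_pts ~ H @ im1_pts, by least squares."""
    im1_pts = np.asarray(im1_pts, dtype=float)
    im2_pts = np.asarray(im2_pts, dtype=float)
    n = len(im1_pts)
    A = np.zeros((2 * n, 8))
    b = np.zeros(2 * n)
    for i, ((x, y), (xp, yp)) in enumerate(zip(im1_pts, im2_pts)):
        # Row for x': h11 x + h12 y + h13 - h31 x x' - h32 y x' = x'
        A[2 * i] = [x, y, 1, 0, 0, 0, -x * xp, -y * xp]
        # Row for y': h21 x + h22 y + h23 - h31 x y' - h32 y y' = y'
        A[2 * i + 1] = [0, 0, 0, x, y, 1, -x * yp, -y * yp]
        b[2 * i], b[2 * i + 1] = xp, yp
    h, *_ = np.linalg.lstsq(A, b, rcond=None)
    # Re-insert the fixed h33 = 1 and reshape into a 3x3 matrix.
    return np.append(h, 1.0).reshape(3, 3)
```

With exactly four points the system is square and the fit is exact; clicking more points lets least squares reduce the effect of small clicking errors.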

I used inverse warping to warp the images. First, I predicted the bounding box of the warped image by mapping the four corners of the original image through $H$. Then, for each pixel in that bounding box, I applied the inverse warp $H^{-1}$ to find the corresponding location in the original image and took the value there. The whole computation is vectorized, without Python loops.
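A vectorized NumPy sketch of that inverse warp is below. It assumes nearest-neighbour sampling (the text does not say which interpolation was used; bilinear is a common alternative) and leaves output pixels that map outside the source image at 0. `warp_image` is a hypothetical name.

```python
import numpy as np


def warp_image(im, H):
    """Inverse-warp `im` (h x w or h x w x c) by homography H.

    Returns the warped image and the (x_min, y_min) offset of its bounding box.
    Sampling is nearest-neighbour, which is an assumption of this sketch.
    """
    h, w = im.shape[:2]
    # Forward-map the four corners to predict the output bounding box.
    corners = np.array([[0, w - 1, w - 1, 0], [0, 0, h - 1, h - 1], [1, 1, 1, 1]], dtype=float)
    mapped = H @ corners
    mapped = mapped[:2] / mapped[2]
    x_min, y_min = np.floor(mapped.min(axis=1)).astype(int)
    x_max, y_max = np.ceil(mapped.max(axis=1)).astype(int)

    # Every output pixel in the bounding box, as homogeneous coordinates.
    xs, ys = np.meshgrid(np.arange(x_min, x_max + 1), np.arange(y_min, y_max + 1))
    pts = np.stack([xs.ravel(), ys.ravel(), np.ones(xs.size)])

    # Inverse warp: find where each output pixel comes from in the source.
    src = np.linalg.inv(H) @ pts
    sx = np.rint(src[0] / src[2]).astype(int)
    sy = np.rint(src[1] / src[2]).astype(int)
    valid = (sx >= 0) & (sx < w) & (sy >= 0) & (sy < h)

    # Gather source values for the valid pixels; the rest stay 0.
    flat = np.zeros((xs.size,) + im.shape[2:], dtype=im.dtype)
    flat[valid] = im[sy[valid], sx[valid]]
    return flat.reshape(xs.shape + im.shape[2:]), (int(x_min), int(y_min))
```

The returned offset locates the warped image's top-left corner in the output coordinate frame, which is what is needed to place several warped images on one mosaic canvas.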

To rectify an image, I used the fact that the window is square: I clicked on the four corners of a window and stored them in `im1_pts`, and defined `im2_pts` by hand as the corners of a square.
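A sketch of the rectification setup follows, under loudly stated assumptions: the hypothetical helper `square_target` builds `im2_pts` as a square sharing the first clicked corner, with side equal to the mean clicked edge length, and expects clicks in the order top-left, top-right, bottom-right, bottom-left. With exactly four correspondences the 8 × 8 system is square, so the hypothetical `exact_h` solves it directly instead of using least squares.

```python
import numpy as np


def square_target(im1_pts):
    """Hypothetical helper: square corners matching clicked window corners.

    Assumes clicks are ordered top-left, top-right, bottom-right, bottom-left.
    """
    pts = np.asarray(im1_pts, dtype=float)
    # Length of each clicked edge (corner i to corner i + 1, wrapping around).
    edges = np.linalg.norm(pts - np.roll(pts, -1, axis=0), axis=1)
    s = edges.mean()
    x0, y0 = pts[0]
    return np.array([[x0, y0], [x0 + s, y0], [x0 + s, y0 + s], [x0, y0 + s]])


def exact_h(im1_pts, im2_pts):
    """Solve the square 8x8 system for H (h33 = 1) from exactly four points."""
    A, b = [], []
    for (x, y), (xp, yp) in zip(im1_pts, im2_pts):
        A.append([x, y, 1, 0, 0, 0, -x * xp, -y * xp])
        A.append([0, 0, 0, x, y, 1, -x * yp, -y * yp])
        b.extend([xp, yp])
    h = np.linalg.solve(np.array(A, dtype=float), np.array(b, dtype=float))
    return np.append(h, 1.0).reshape(3, 3)
```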

I used masks to blend the images. Each image's mask is 1 at the image's center and falls off until it reaches 0 at the edges.
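A minimal sketch of this weighted blending is below. It assumes a linear falloff built as the product of horizontal and vertical ramps (the exact falloff shape is not stated) and that images and masks have already been warped onto a common canvas. `center_mask` and `blend` are hypothetical names.

```python
import numpy as np


def center_mask(h, w):
    """1 at the image centre, falling linearly to 0 at every edge (assumed falloff)."""
    wy = 1 - np.abs(np.linspace(-1, 1, h))
    wx = 1 - np.abs(np.linspace(-1, 1, w))
    return np.outer(wy, wx)


def blend(images, masks, eps=1e-8):
    """Per-pixel weighted average of h x w x c images using h x w masks."""
    num = sum(m[..., None] * im for im, m in zip(images, masks))
    den = sum(masks)[..., None]
    # Where no image contributes (den == 0) the result stays 0.
    return num / np.maximum(den, eps)
```

Because each pixel is a weighted average, any brightness difference between the inputs is smoothed across the overlap rather than removed, which is consistent with the difficulty seen in Pair B.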

Pair B did not blend well because the brightness differed between the two pictures.

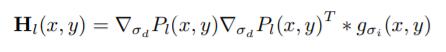

The interest points are Harris corners. The Harris matrix at position (x, y) is the smoothed outer product of the image gradients:

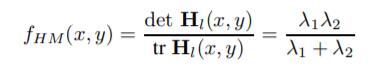

To find interest points, we first compute the “corner strength” function:

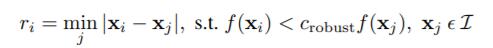
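A minimal NumPy sketch of both steps follows, assuming a grayscale float image. Following the MOPS paper, the corner strength here is the harmonic mean $f = \det H / \operatorname{tr} H$ of the Harris matrix's eigenvalues. The separable Gaussian blur (written out to avoid a SciPy dependency) and the default scales `sigma_d=1.0` and `sigma_i=1.5` are assumptions of this sketch, and `corner_strength` is a hypothetical name.

```python
import numpy as np


def gaussian_blur(im, sigma):
    """Separable Gaussian blur with zero padding.

    Assumes each image dimension is at least the kernel length 2 * radius + 1.
    """
    radius = max(1, int(3 * sigma))
    x = np.arange(-radius, radius + 1)
    k = np.exp(-x**2 / (2 * sigma**2))
    k /= k.sum()
    im = np.apply_along_axis(lambda r: np.convolve(r, k, mode="same"), 1, im)
    return np.apply_along_axis(lambda c: np.convolve(c, k, mode="same"), 0, im)


def corner_strength(im, sigma_d=1.0, sigma_i=1.5, eps=1e-10):
    """Harris-matrix corner strength det(H) / trace(H) at every pixel."""
    smoothed = gaussian_blur(im.astype(float), sigma_d)
    gy, gx = np.gradient(smoothed)  # derivatives along rows (y) and columns (x)
    # Entries of the smoothed outer product of the gradient.
    sxx = gaussian_blur(gx * gx, sigma_i)
    syy = gaussian_blur(gy * gy, sigma_i)
    sxy = gaussian_blur(gx * gy, sigma_i)
    det = sxx * syy - sxy**2
    trace = sxx + syy
    return det / (trace + eps)
```

Interest points are then the local maxima of this map, which the suppression step below thins out.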

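Using Adaptive Non-Maximal Suppression (ANMS) from "Multi-Image Matching using Multi-Scale Oriented Patches", we select 500 interest points that are well spread across the image. For each point we compute the suppression radius

$$r_i = \min_j \lVert \mathbf{x}_i - \mathbf{x}_j \rVert \quad \text{s.t.} \quad f(\mathbf{x}_i) < c_{\text{robust}}\, f(\mathbf{x}_j),$$

that is, the distance to the nearest interest point that is sufficiently stronger, and keep the 500 points with the largest $r_i$.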
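A brute-force NumPy sketch of ANMS is below. It is $O(n^2)$ in memory and time, which is fine for a few thousand candidates but is an assumption about scale. The default `c_robust=0.9` is the value suggested in the MOPS paper, and `anms` is a hypothetical name.

```python
import numpy as np


def anms(coords, strengths, n_keep=500, c_robust=0.9):
    """Return indices of the n_keep points with the largest suppression radius."""
    coords = np.asarray(coords, dtype=float)
    strengths = np.asarray(strengths, dtype=float)
    # stronger[i, j] is True when point j is sufficiently stronger than point i.
    stronger = strengths[:, None] < c_robust * strengths[None, :]
    d2 = ((coords[:, None, :] - coords[None, :, :]) ** 2).sum(axis=-1)
    # Only sufficiently stronger points can suppress point i.
    d2 = np.where(stronger, d2, np.inf)
    radii = np.sqrt(d2.min(axis=1))  # the globally strongest point gets inf
    order = np.argsort(-radii, kind="stable")
    return order[:n_keep], radii
```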

Given an oriented interest point, we sample an 8 × 8 patch of pixels around the sub-pixel location of the interest point, using a spacing of s = 5 pixels between samples. After sampling, the descriptor vector is normalised so that its mean is 0 and its standard deviation is 1. This makes the features invariant to affine changes in intensity (bias and gain).
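A simplified sketch of descriptor extraction follows. Unlike the text, it ignores orientation and sub-pixel location: it samples an axis-aligned 8 × 8 grid every 5 pixels from a 40 × 40 window at an integer location. It also assumes `blurred` has already been low-pass filtered so that sampling every 5 pixels does not alias. `extract_descriptor` is a hypothetical name.

```python
import numpy as np


def extract_descriptor(blurred, x, y, window=40, spacing=5, eps=1e-8):
    """64-D bias/gain-normalised descriptor, or None if the window leaves the image."""
    half = window // 2
    h, w = blurred.shape[:2]
    if x < half or y < half or x + half > w or y + half > h:
        return None
    # Take every `spacing`-th pixel of the window: 40 / 5 = 8 samples per side.
    patch = blurred[y - half:y + half:spacing, x - half:x + half:spacing].astype(float)
    v = patch.ravel()
    # Zero mean and unit standard deviation remove bias and gain.
    return (v - v.mean()) / (v.std() + eps)
```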

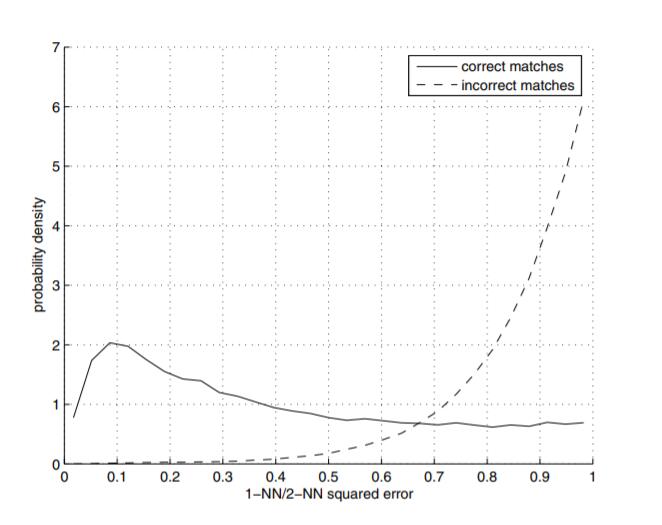

According to "Multi-Image Matching using Multi-Scale Oriented Patches", the ratio $e_{1\text{-NN}}/e_{2\text{-NN}}$ is a good indicator of whether a match is correct. Here $e_{1\text{-NN}}$ denotes the error of the best match (first nearest neighbour) and $e_{2\text{-NN}}$ the error of the second-best match (second nearest neighbour). I accepted a match only when this ratio was below a threshold of 0.5.
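A minimal sketch of this ratio test is below. It assumes the matching error is the sum of squared differences (SSD) between descriptors and that the second image has at least two descriptors. Comparing `e1 < ratio * e2` avoids dividing by zero. `match_features` is a hypothetical name.

```python
import numpy as np


def match_features(desc1, desc2, ratio=0.5):
    """Return (i, j) pairs where desc1[i]'s best match desc2[j] passes e1/e2 < ratio."""
    d1 = np.asarray(desc1, dtype=float)
    d2 = np.asarray(desc2, dtype=float)
    # SSD between every pair of descriptors, shape (n1, n2).
    ssd = ((d1[:, None, :] - d2[None, :, :]) ** 2).sum(axis=-1)
    order = np.argsort(ssd, axis=1)
    rows = np.arange(len(d1))
    e1 = ssd[rows, order[:, 0]]  # error of the first nearest neighbour
    e2 = ssd[rows, order[:, 1]]  # error of the second nearest neighbour
    keep = e1 < ratio * e2
    return [(int(i), int(order[i, 0])) for i in rows[keep]]
```

An ambiguous descriptor that is about equally close to two candidates has a ratio near 1 and is rejected, even if both candidates are close in absolute terms.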

I followed the algorithm below:

I used a 5-level Gaussian image pyramid to detect corners at multiple scales, with a separate set of feature descriptors for each level.
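A small sketch of building such a pyramid follows, assuming a grayscale image. Each level is blurred and then subsampled by 2. The 5-tap binomial kernel [1, 4, 6, 4, 1] / 16 is an assumed approximation to a Gaussian, and `gaussian_pyramid` is a hypothetical name.

```python
import numpy as np


def blur5(im):
    """Separable 5-tap binomial blur with reflected borders (Gaussian approximation)."""
    k = np.array([1, 4, 6, 4, 1], dtype=float) / 16.0
    h, w = im.shape
    pad = np.pad(im, 2, mode="reflect")
    tmp = sum(k[i] * pad[:, i:i + w] for i in range(5))  # filter along rows
    return sum(k[i] * tmp[i:i + h, :] for i in range(5))  # filter along columns


def gaussian_pyramid(im, levels=5):
    """List of `levels` images, each blurred and half the size of the previous one."""
    pyr = [np.asarray(im, dtype=float)]
    for _ in range(levels - 1):
        pyr.append(blur5(pyr[-1])[::2, ::2])
    return pyr
```

Corners found at level $l$ are at coordinates scaled by $2^{-l}$, so they need to be multiplied by $2^l$ to map them back to the full-resolution image.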

For each interest point, we also compute an orientation $\theta$, where the orientation vector $[\cos\theta, \sin\theta] = \mathbf{u}/|\mathbf{u}|$ comes from the smoothed local gradient $\mathbf{u}$.
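A sketch of the orientation step follows, assuming the caller passes a pyramid level that has already been smoothed (the text does not give the smoothing scale). `orientations` computes $\theta$ with `np.arctan2`, which gives the same angle as normalising $\mathbf{u}$. The hypothetical helper `sample_grid` shows how $\theta$ would rotate the 8 × 8, spacing-5 sampling grid. Actually sampling at those non-integer positions (for example bilinearly) is left out.

```python
import numpy as np


def orientations(smoothed, xs, ys):
    """Angle of the gradient u = (du/dx, du/dy) at integer pixels (xs, ys)."""
    gy, gx = np.gradient(np.asarray(smoothed, dtype=float))
    return np.arctan2(gy[ys, xs], gx[ys, xs])


def sample_grid(x, y, theta, size=8, spacing=5):
    """Hypothetical helper: coordinates of a size x size grid rotated by theta about (x, y)."""
    offs = (np.arange(size) - (size - 1) / 2) * spacing
    dx, dy = np.meshgrid(offs, offs)
    c, s = np.cos(theta), np.sin(theta)
    # Rotate each offset (dx, dy) by theta, then translate to the interest point.
    return x + c * dx - s * dy, y + s * dx + c * dy
```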

By computing the homography between each pair of images, we can build a relation graph that links images whose pair yields a homography. Clustering the connected images then lets us recognize every panorama in the set.
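A sketch of the clustering step as connected components, found with a small union-find, is below. It assumes `linked_pairs` already lists the image pairs judged to be related. The criterion for that, such as a minimum number of inlier matches, is not stated and is an assumption. `group_panoramas` is a hypothetical name.

```python
def group_panoramas(n_images, linked_pairs):
    """Group image indices into connected components of the relation graph."""
    parent = list(range(n_images))

    def find(i):
        # Follow parent links to the root, halving the path along the way.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    for a, b in linked_pairs:
        ra, rb = find(a), find(b)
        if ra != rb:
            parent[rb] = ra  # merge the two components

    groups = {}
    for i in range(n_images):
        groups.setdefault(find(i), []).append(i)
    return sorted(groups.values())
```

Each returned group is one panorama, and images that match nothing else end up as singletons.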

Autostitching is really interesting. I learned all of the algorithms needed to produce a seamless panorama, and working through the process step by step helped me fully understand these computer vision algorithms.