Xuanbai Chen, cs194-26-ags

I chose Seam Carving as my first project. In this project, I reimplement Seam Carving for Content-Aware Image Resizing by Shai Avidan and Ariel Shamir.

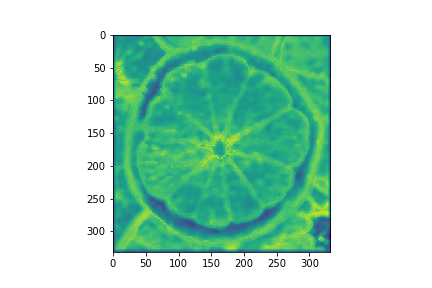

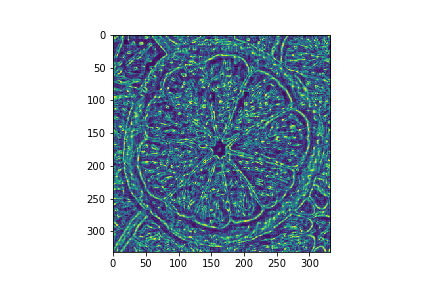

To carve seams in the vertical or horizontal direction, we need to define an energy function that tells us which line (row or column) should be removed. We can simply use the Sobel operator to compute the basic energy function, as described in the paper.
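As a rough illustration of the energy step, here is a minimal NumPy sketch that computes |dI/dx| + |dI/dy| with 3×3 Sobel kernels. The function name `sobel_energy`, the grayscale input, and the edge-replicating border are assumptions made for this example, not necessarily what the project code does.

```python
import numpy as np

def sobel_energy(gray):
    """Return |dI/dx| + |dI/dy| for a 2-D grayscale image (Sobel kernels)."""
    gray = np.asarray(gray, dtype=np.float64)
    # Replicate the border so the output has the same shape as the input
    # (assumed border handling).
    p = np.pad(gray, 1, mode="edge")
    # Horizontal derivative: right neighbours minus left neighbours, weights 1-2-1.
    gx = (p[:-2, 2:] + 2 * p[1:-1, 2:] + p[2:, 2:]
          - p[:-2, :-2] - 2 * p[1:-1, :-2] - p[2:, :-2])
    # Vertical derivative: lower neighbours minus upper neighbours, weights 1-2-1.
    gy = (p[2:, :-2] + 2 * p[2:, 1:-1] + p[2:, 2:]
          - p[:-2, :-2] - 2 * p[:-2, 1:-1] - p[:-2, 2:])
    return np.abs(gx) + np.abs(gy)
```

Flat regions get zero energy and strong edges get high energy, so low-energy seams tend to pass through visually uniform areas.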

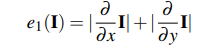

After computing the basic energy image, we use dynamic programming to compute the cumulative cost matrix M and a backtrack matrix, which together determine which seam should be removed.
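The dynamic programming step can be sketched as follows for a vertical seam. This is an unoptimized illustration. The names `find_vertical_seam` and `remove_vertical_seam` are made up for this example, and a horizontal seam can be handled by transposing the image first.

```python
import numpy as np

def find_vertical_seam(energy):
    """Return, for every row, the column index of the minimum-energy vertical seam."""
    energy = np.asarray(energy, dtype=np.float64)
    h, w = energy.shape
    M = energy.copy()                             # cumulative minimum energy
    backtrack = np.zeros((h, w), dtype=np.int64)  # best predecessor column
    for i in range(1, h):
        for j in range(w):
            lo = max(j - 1, 0)
            hi = min(j + 1, w - 1)
            # Cheapest of the (up to) three neighbours in the row above.
            k = lo + int(np.argmin(M[i - 1, lo:hi + 1]))
            backtrack[i, j] = k
            M[i, j] += M[i - 1, k]
    # Start from the cheapest pixel in the last row and walk back up.
    seam = np.zeros(h, dtype=np.int64)
    seam[-1] = int(np.argmin(M[-1]))
    for i in range(h - 1, 0, -1):
        seam[i - 1] = backtrack[i, seam[i]]
    return seam

def remove_vertical_seam(image, seam):
    """Delete one pixel per row (the seam) from an H x W or H x W x C image."""
    h, w = image.shape[:2]
    keep = np.ones((h, w), dtype=bool)
    keep[np.arange(h), seam] = False
    return image[keep].reshape((h, w - 1) + image.shape[2:])
```

Repeating "compute energy, find seam, remove seam" once per column to be removed shrinks the image width one pixel at a time.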

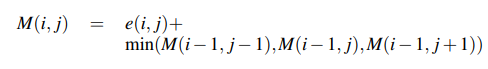

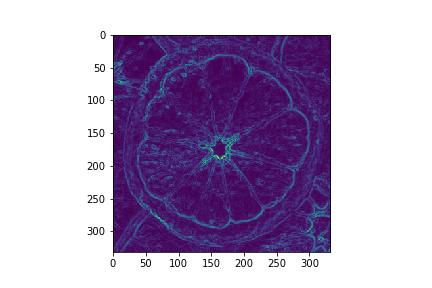

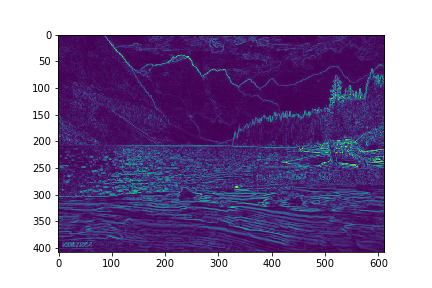

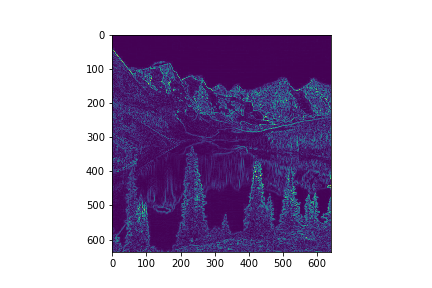

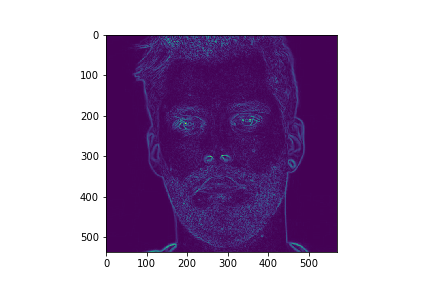

Original Images : Energy Images

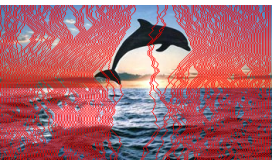

Seam Carving Images

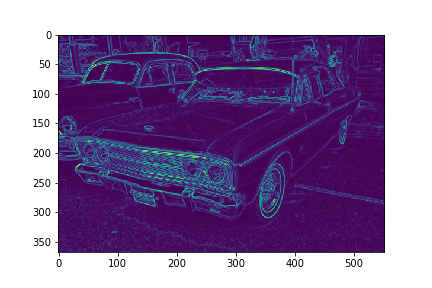

Original Images : Energy Images

Seam Carving Images

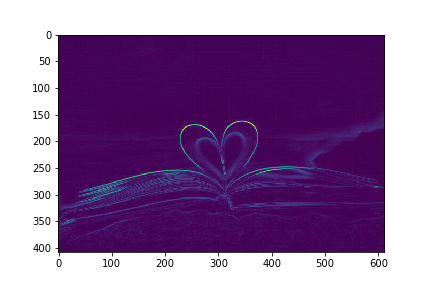

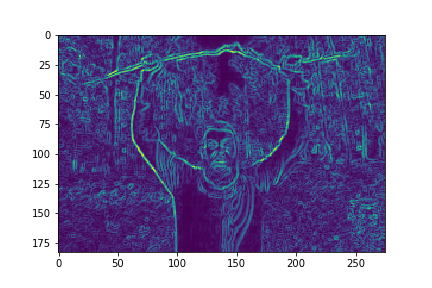

Original Images : Energy Images

Seam Carving Images

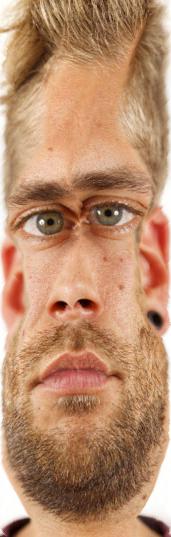

Generally, highly structured scenes and human faces suffer from artifacts, while scenes with textured objects on simple backgrounds work better. Some of the images above also show artifacts because they are full of textured objects. I also tested some images with human faces, and they do indeed produce artifacts.

Original Images : Energy Images

Seam Carving Images

Some Other Failed Images

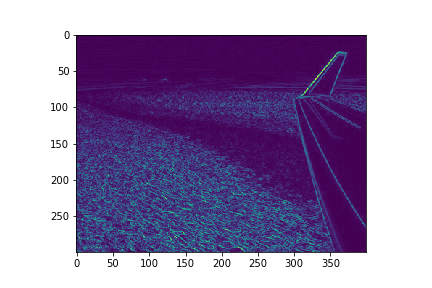

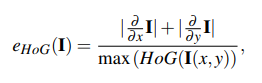

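In this part, I tried two other energy functions, entropy and HoG, and compared the results. The entropy energy computes the entropy over a 9×9 window and adds it to e1. Following the paper, eHoG divides e1 by the maximum bin of a histogram of oriented gradients computed around each pixel:

$$e_{HoG}(I) = \frac{\left|\frac{\partial}{\partial x} I\right| + \left|\frac{\partial}{\partial y} I\right|}{\max\left(HoG(I(x, y))\right)}$$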
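The entropy term described above could be computed along these lines. This is only a sketch: the quantization into 16 intensity levels, the assumed [0, 255] intensity range, the edge padding, and the names `local_entropy` and `entropy_energy` are all assumptions for illustration, not details taken from the project.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def local_entropy(gray, window=9, levels=16):
    """Shannon entropy (in bits) of quantized intensities in a window around each pixel."""
    gray = np.asarray(gray, dtype=np.float64)
    # Quantize intensities, assumed to lie in [0, 255], into a few levels.
    q = np.clip((gray / 256.0 * levels).astype(np.int64), 0, levels - 1)
    r = window // 2
    q = np.pad(q, r, mode="edge")
    patches = sliding_window_view(q, (window, window))  # shape (H, W, window, window)
    entropy = np.zeros(patches.shape[:2])
    n = window * window
    for level in range(levels):
        # Fraction of pixels in each window that fall into this level.
        p = (patches == level).sum(axis=(-1, -2)) / n
        nz = p > 0
        entropy[nz] -= p[nz] * np.log2(p[nz])
    return entropy

def entropy_energy(gray, e1, window=9):
    """Add the local entropy to an existing e1 energy map of the same shape."""
    return np.asarray(e1, dtype=np.float64) + local_entropy(gray, window)
```

Because the entropy is at most log2 of the number of levels, its influence relative to e1 depends on how e1 is scaled.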

Entropy Images

HoG Images

In this part, images should not be stretched uniformly, since some pixels have more energy than others. Instead, we should stretch the regions with less energy: we pick the K lowest-energy columns (seams) and stretch those, as the images below show:

I stretch the two images below by factors of 1.2 and 1.5.
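One possible way to implement this stretching is sketched below: find the K lowest-energy seams by temporarily removing them, remember where they were in the original image, and then duplicate those pixels. The helper names, the simple gradient energy, and the plain duplication (rather than averaging with neighbours) are assumptions for this example. It also assumes a grayscale image and K smaller than the width minus one.

```python
import numpy as np

def gradient_energy(gray):
    # Simple stand-in energy (assumption): |dI/dx| + |dI/dy| via finite differences.
    gy, gx = np.gradient(gray)
    return np.abs(gx) + np.abs(gy)

def min_vertical_seam(energy):
    """Column index per row of the minimum-energy vertical seam."""
    h, w = energy.shape
    M = energy.astype(np.float64).copy()
    back = np.zeros((h, w), dtype=np.int64)
    for i in range(1, h):
        prev = M[i - 1]
        left = np.concatenate(([np.inf], prev[:-1]))
        right = np.concatenate((prev[1:], [np.inf]))
        choices = np.stack([left, prev, right])   # predecessor costs, shape (3, w)
        idx = np.argmin(choices, axis=0)          # 0 = left, 1 = up, 2 = right
        back[i] = np.arange(w) + idx - 1
        M[i] += choices[idx, np.arange(w)]
    seam = np.empty(h, dtype=np.int64)
    seam[-1] = np.argmin(M[-1])
    for i in range(h - 1, 0, -1):
        seam[i - 1] = back[i, seam[i]]
    return seam

def stretch_width(gray, k):
    """Widen a grayscale image by k columns by duplicating its k cheapest seams."""
    gray = np.asarray(gray, dtype=np.float64)
    h, w = gray.shape
    work = gray.copy()
    cols = np.tile(np.arange(w), (h, 1))      # original column of each remaining pixel
    duplicate = np.zeros((h, w), dtype=bool)  # pixels of the original to duplicate
    rows = np.arange(h)
    for _ in range(k):
        seam = min_vertical_seam(gradient_energy(work))
        duplicate[rows, cols[rows, seam]] = True
        keep = np.ones(work.shape, dtype=bool)
        keep[rows, seam] = False
        work = work[keep].reshape(h, -1)
        cols = cols[keep].reshape(h, -1)
    out = np.empty((h, w + k))
    for i in range(h):
        # Every row has exactly k marked pixels, so each row grows by k.
        out[i] = np.repeat(gray[i], 1 + duplicate[i].astype(int))
    return out
```

For a stretch factor f, k would be roughly `round((f - 1) * width)`, for example f = 1.2 or f = 1.5 as in the experiments here.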

I learned how to stretch images without simply scaling the originals.

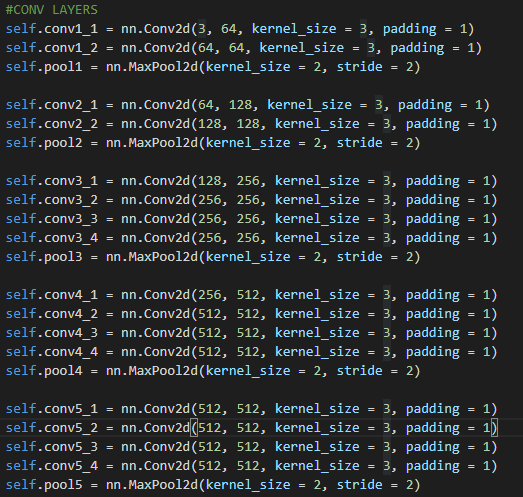

In this project, we reimplement the paper A Neural Algorithm of Artistic Style, which takes the style of one image and the content of another and combines them.

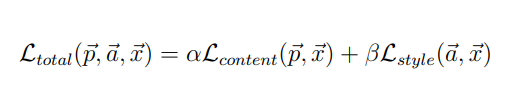

The main loss function is as below:

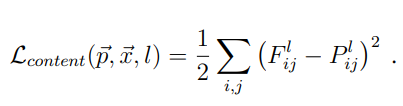

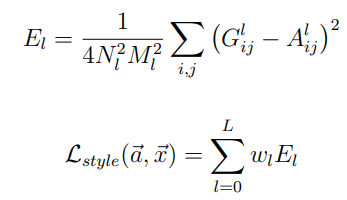

The content loss is just the squared-error loss, while the style loss uses several layers of VGG19 to compute the difference between the Gram matrices of the generated image and the original style image.
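To make the two loss terms concrete, here is a small NumPy sketch that works on feature maps of shape (channels, height, width). The normalization constants follow my reading of Gatys et al., and the function names are hypothetical. A real implementation would compute these on VGG19 activations inside an autograd framework, and may normalize differently.

```python
import numpy as np

def gram_matrix(features):
    """Gram matrix (C x C) of a feature map with shape (C, H, W)."""
    c, h, w = features.shape
    F = features.reshape(c, h * w)
    return F @ F.T

def content_loss(gen_features, content_features):
    """Squared-error loss between generated and content feature maps."""
    return 0.5 * np.sum((gen_features - content_features) ** 2)

def style_layer_loss(gen_features, style_features):
    """Squared difference of Gram matrices for one layer, normalized by size."""
    c, h, w = gen_features.shape
    G = gram_matrix(gen_features)
    A = gram_matrix(style_features)
    return np.sum((G - A) ** 2) / (4.0 * c ** 2 * (h * w) ** 2)

def total_loss(content_term, style_terms, content_weight=1.0, style_weight=1000.0):
    """Weighted sum; default weights mirror the settings listed in this report."""
    return content_weight * content_term + style_weight * sum(style_terms)
```

The Gram matrix discards spatial layout and keeps only correlations between channels, which is why it captures texture-like style rather than the arrangement of objects.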

The model architecture is shown below. Instead of the average pooling used in the paper, I use max pooling at the end of each layer. The kernels have 64, 128, 256, 512, and 512 channels respectively. The kernel size is 3 and the padding is 1.

Optimizer : L-BFGS algorithm

Learning Rate : 1

Epoch : 500

Content Weight : 1

Style Weight : 1000

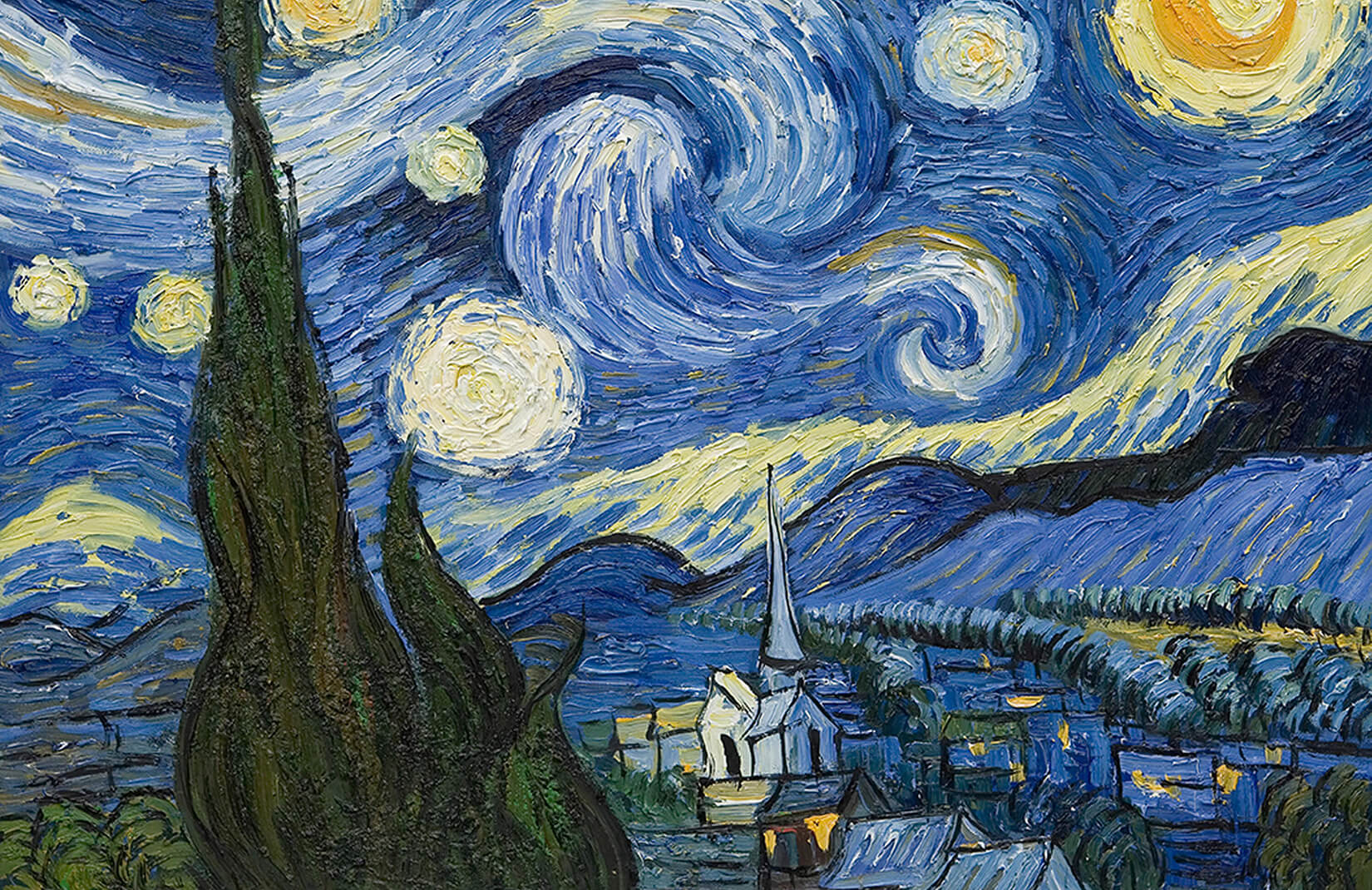

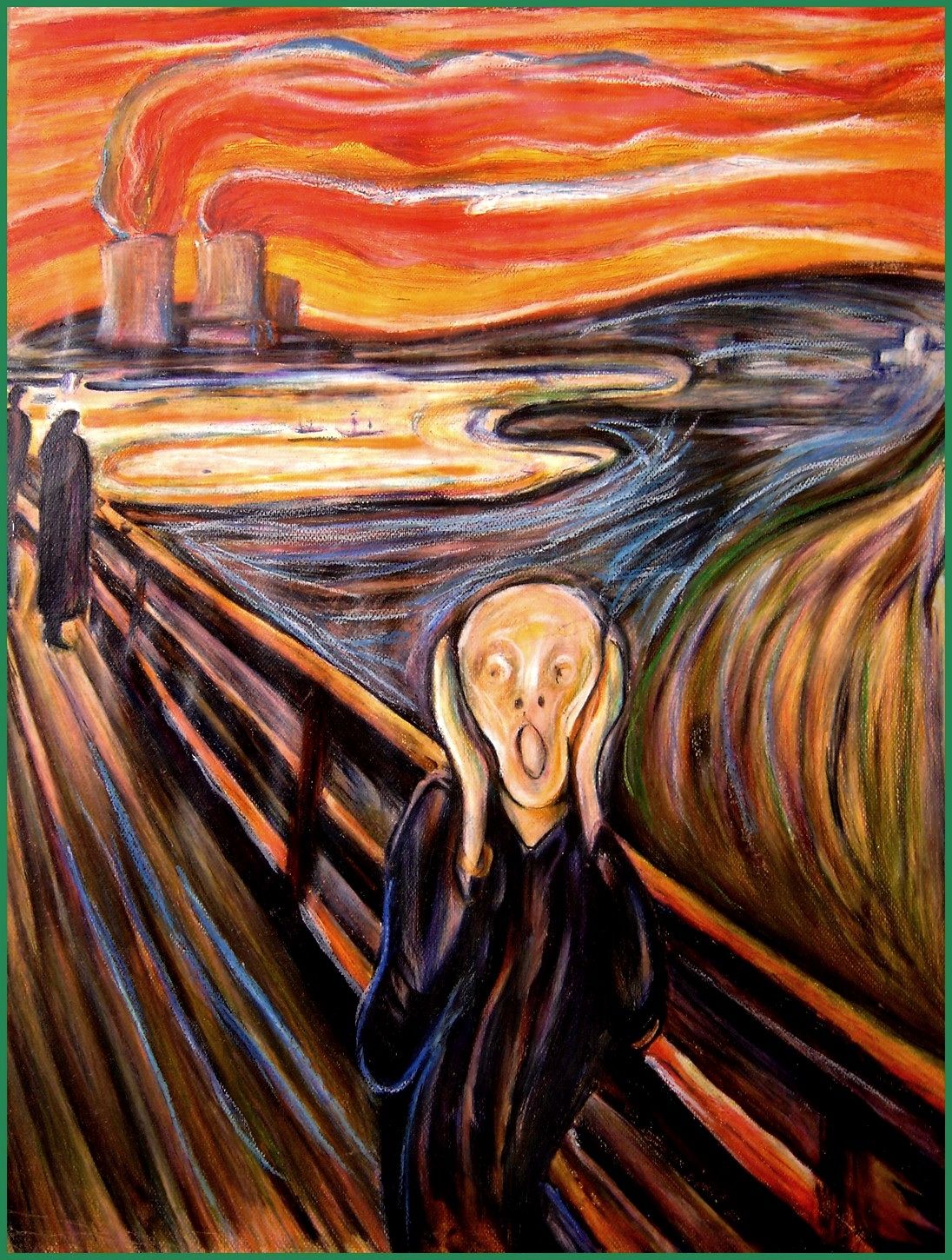

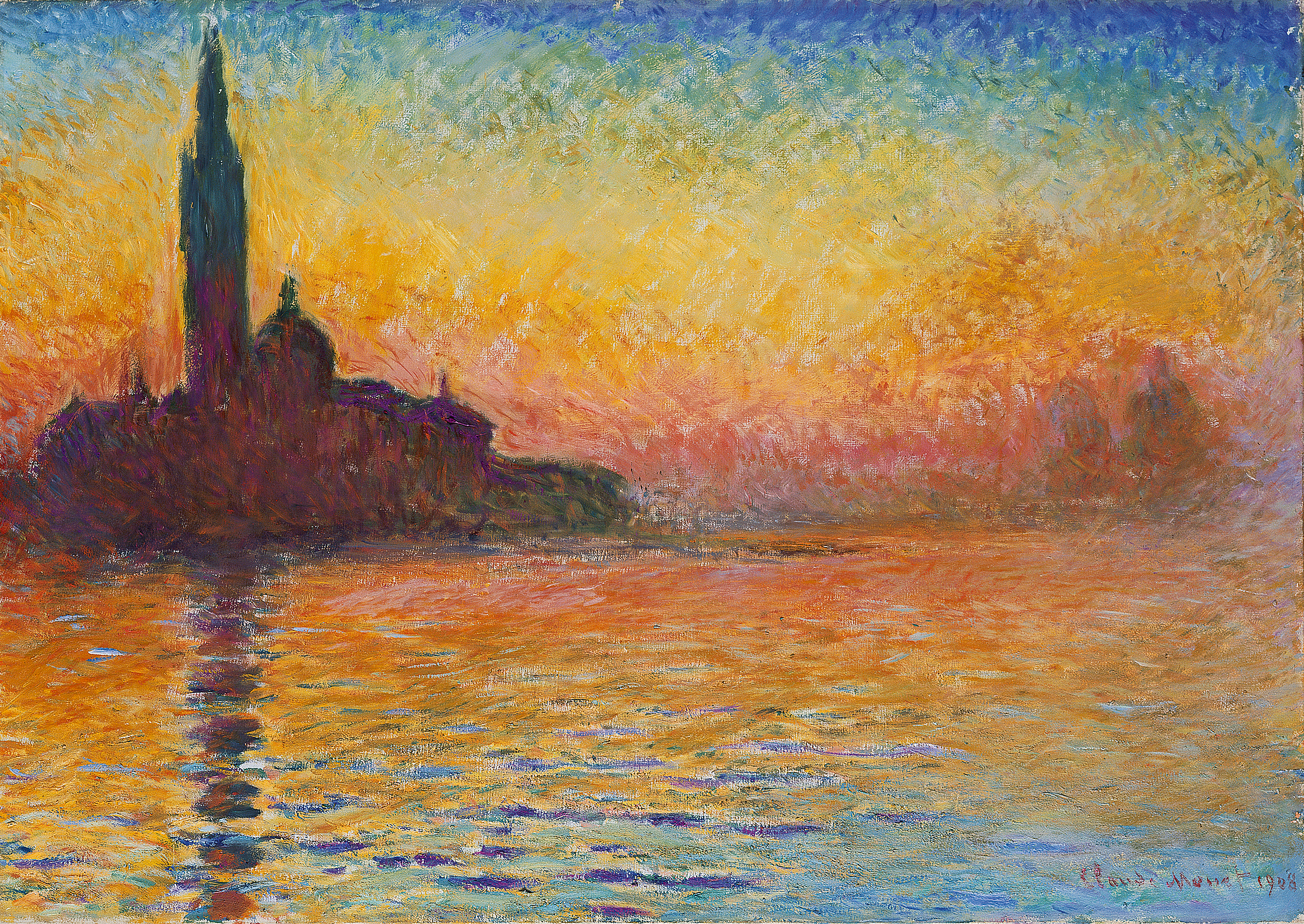

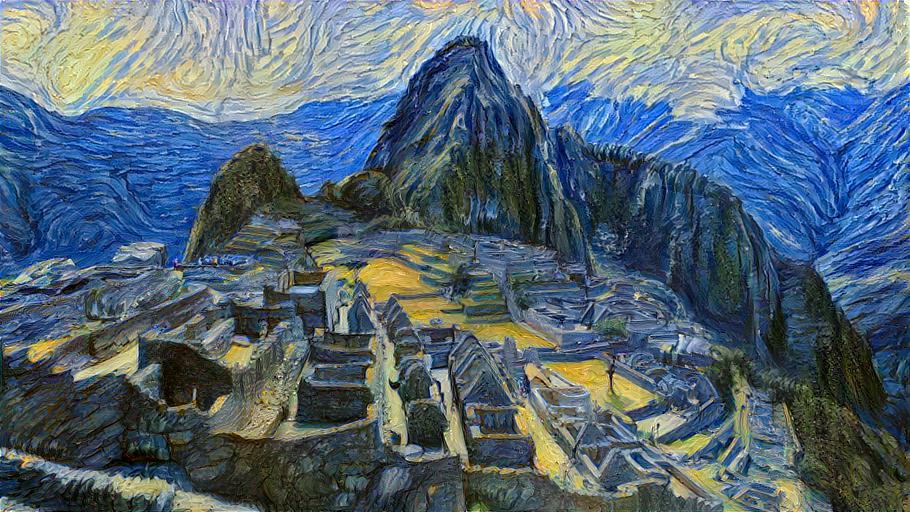

I select 4 content images; the first is the one shown in the paper. I also select 6 style images: the first four are those shown in the paper, and the last two are styles that I chose.

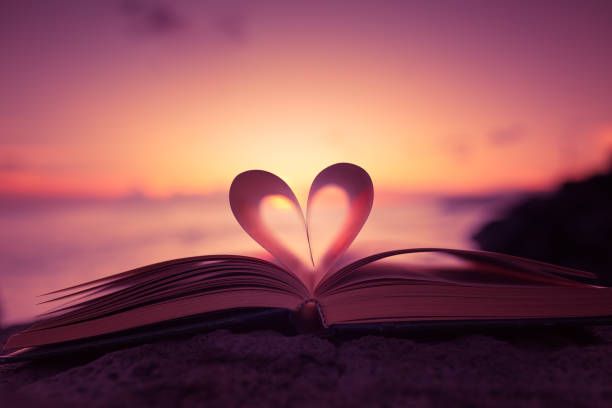

Content Images

Style Images

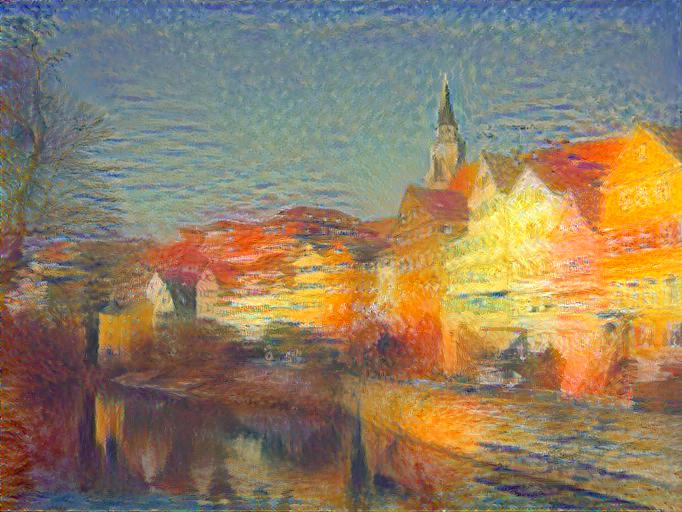

Besides transferring Neckarfront to the 4 styles proposed in the paper, I also transfer it to the other two styles.

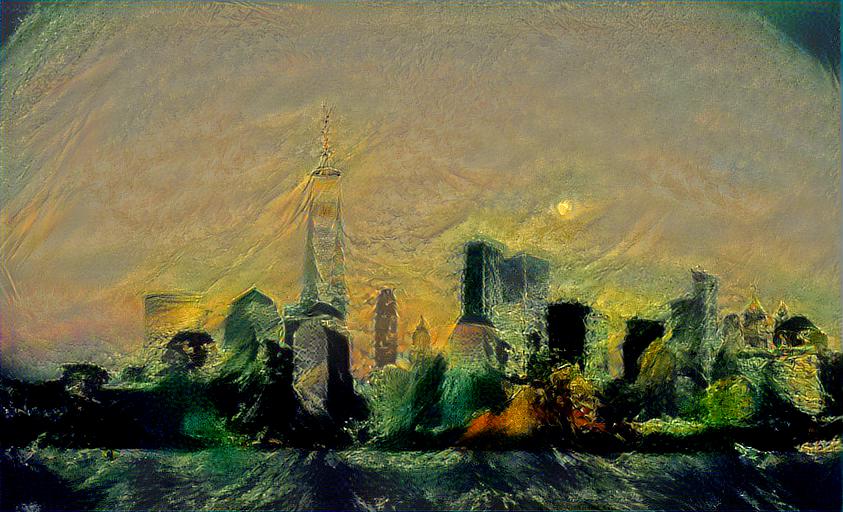

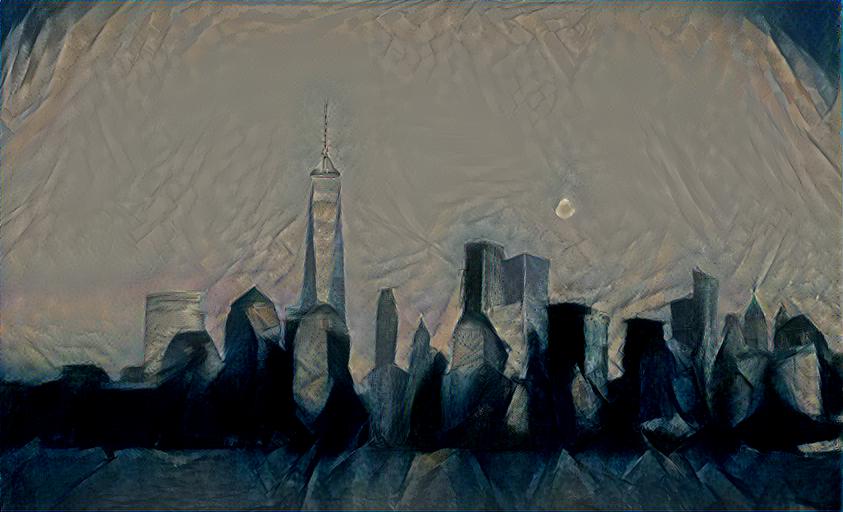

I will test the other 3 images on those 6 styles.

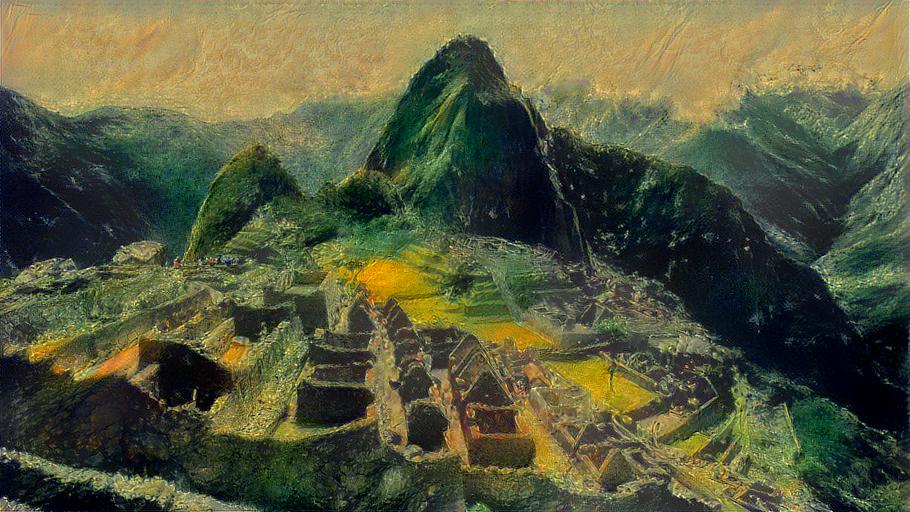

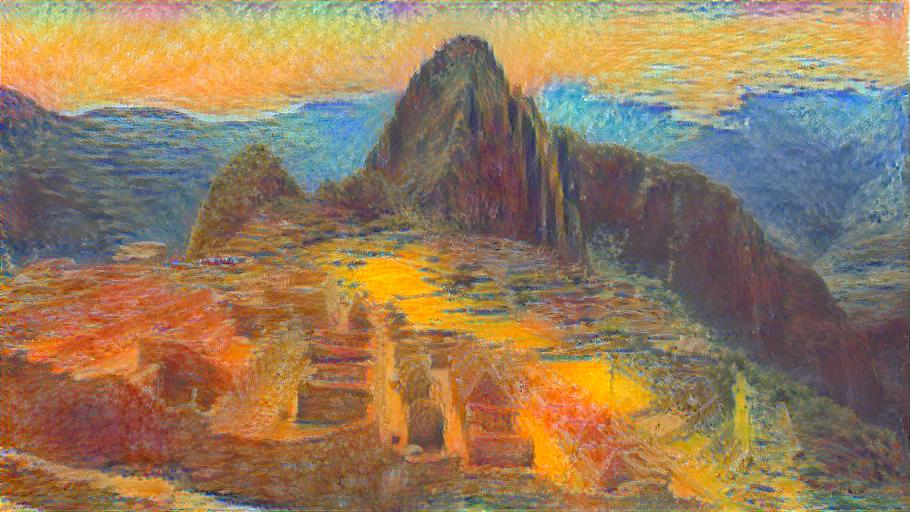

The first image

The second image

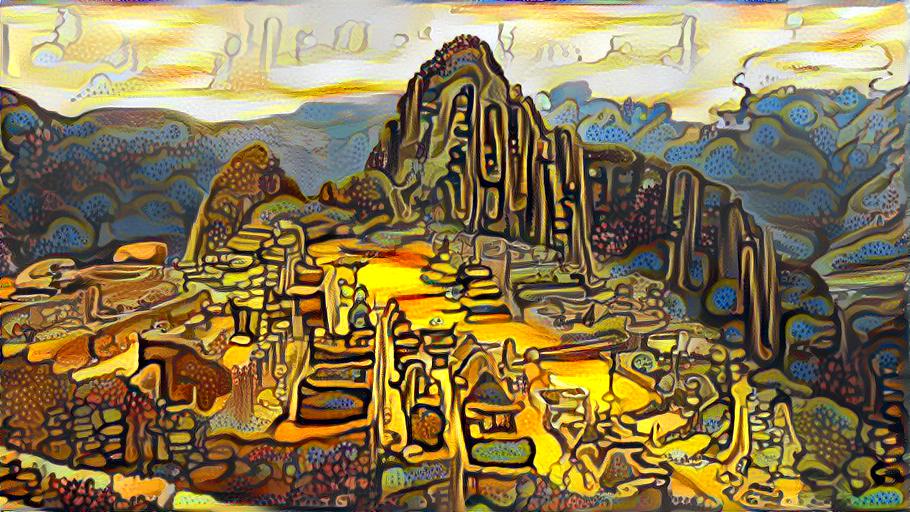

The third image

This image failed in some ways, since the whole sky comes out gray. The reason is probably that the CNN does not know how to color it. The sky in the original image is empty and uniformly blue. The pixel values there are too flat to tell apart, so the CNN could not know how to put different colors in that region.

I really learned a ton in this project and was surprised by the magic of CNNs.