Project 3: Ghostbusters (Part I)

Running won't save you from my

Particle filter!

Table of contents

- Introduction

- Ghostbusters and Bayes Nets

- Question 1 (2 points): Bayes Net Structure

- Question 2 (3 points): Join Factors

- Question 3 (2 points): Eliminate (not ghosts yet)

- Question 4 (2 points): Variable Elimination

- Submission

Introduction

Pacman spends his life running from ghosts, but things were not always so. Legend has it that many years ago, Pacman’s great grandfather Grandpac learned to hunt ghosts for sport. However, he was blinded by his power and could only track ghosts by their banging and clanging.

In this project, you will design Pacman agents that use sensors to locate and eat invisible ghosts. You’ll advance from locating single, stationary ghosts to hunting packs of multiple moving ghosts with ruthless efficiency.

This project includes an autograder for you to grade your answers on your machine. This can be run on all questions with the command:

python autograder.py

It can be run for one particular question, such as q2, by:

python autograder.py -q q2

It can be run for one particular test by commands of the form:

python autograder.py -t test_cases/q1/1-ObsProb

The code for this project contains the following files. You can download all the code and supporting files from this GitHub repository.

| Files you'll edit: | |

inference.py |

Code for tracking ghosts over time using their sounds. |

factorOperations.py |

Operations to compute new joint or magrinalized probability tables. |

| Files you might want to look at: | |

bustersAgents.py |

Agents for playing the Ghostbusters variant of Pacman. |

bayesNet.py |

The BayesNet and Factor classes. |

| Supporting files you can ignore: | |

busters.py |

The main entry to Ghostbusters (replacing Pacman.py). |

bustersGhostAgents.py |

New ghost agents for Ghostbusters. |

distanceCalculator.py |

Computes maze distances, caches results to avoid re-computing. |

game.py |

Inner workings and helper classes for Pacman. |

ghostAgents.py |

Agents to control ghosts. |

graphicsDisplay.py |

Graphics for Pacman. |

graphicsUtils.py |

Support for Pacman graphics. |

keyboardAgents.py |

Keyboard interfaces to control Pacman. |

layout.py |

Code for reading layout files and storing their contents. |

util.py |

Utility functions. |

Files to Edit and Submit: You will fill in portions of inference.py, and factorOperations.py during the assignment. Once you have completed the assignment, you will submit a token generated by submission_autograder.py to Gradescope. The token contains these your code and the outputs generated by your code on some test cases. Please do not change the other files in this distribution.

Evaluation: Your code will be autograded for technical correctness. Please do not change the names of any provided functions or classes within the code, or you will wreak havoc on the autograder. However, the correctness of your implementation – not the autograder’s judgements – will be the final judge of your score. If necessary, we will review and grade assignments individually to ensure that you receive due credit for your work.

Academic Dishonesty: We will be checking your code against other submissions in the class for logical redundancy. If you copy someone else’s code and submit it with minor changes, we will know. These cheat detectors are quite hard to fool, so please don’t try. We trust you all to submit your own work only; please don’t let us down. If you do, we will pursue the strongest consequences available to us.

Getting Help: You are not alone! If you find yourself stuck on something, contact the course staff for help. Office hours, section, and the discussion forum are there for your support; please use them. If you can’t make our office hours, let us know and we will schedule more. We want these projects to be rewarding and instructional, not frustrating and demoralizing. But, we don’t know when or how to help unless you ask.

Discussion: Please be careful not to post spoilers.

Ghostbusters and Bayes Nets

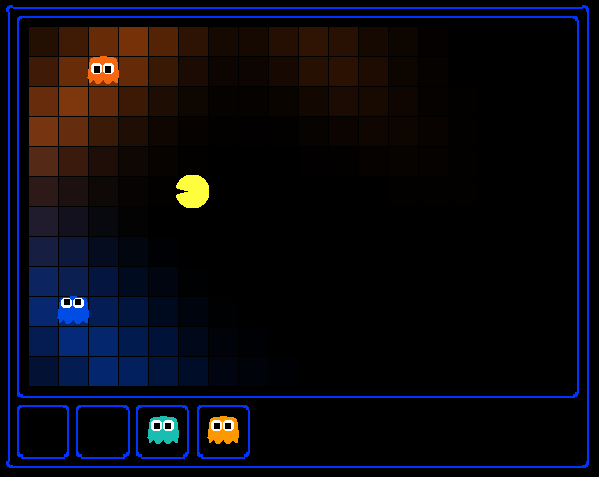

In the CS 188 version of Ghostbusters, the goal is to hunt down scared but invisible ghosts. Pacman, ever resourceful, is equipped with sonar (ears) that provides noisy readings of the Manhattan distance to each ghost. The game ends when Pacman has eaten all the ghosts. To start, try playing a game yourself using the keyboard.

python busters.py

The blocks of color indicate where the each ghost could possibly be, given the noisy distance readings provided to Pacman. The noisy distances at the bottom of the display are always non-negative, and always within 7 of the true distance. The probability of a distance reading decreases exponentially with its difference from the true distance.

In the first part of the project, you will implement basic building blocks of Bayes nets, which will be the useful for the second part of the project.

Bayes Nets and Factors

First, take a look at bayesNet.py to see the classes you’ll be working with – BayesNet and Factor. You can also run this file to see an example BayesNet and associated Factors: python bayesNet.py.

You should look at the printStarterBayesNet function – there are helpful comments that can make your life much easier later on.

The Bayes Net created in this function is shown below:

(Raining –> Traffic <– Ballgame)

A summary of the terminology is given below:

- Bayes Net: This is a representation of a probabilistic model as a directed acyclic graph and a set of conditional probability tables, one for each variable, as shown in lecture. The Traffic Bayes Net above is an example.

- Factor: This stores a table of probabilities, although the sum of the entries in the table is not necessarily 1. A factor is of the general form \(f(X_1, \dots, X_m,y_1, \dots,y_n\mid Z_1, \dots, Z_p, w_1, \dots, w_q)\). Recall that lower case variables have already been assigned. For each possible assignment of values to the \(X_i\) and \(Z_j\) variables, the factor stores a single number. The \(Z_j\) and \(w_k\) variables are said to be conditioned while the \(X_i\) and \(y_l\) variables are unconditioned.

- Conditional Probability Table (CPT): This is a factor satisfying two properties:

- Its entries must sum to 1 for each assignment of the conditional variables.

- There is exactly one unconditioned variable. The Traffic Bayes Net stores the following CPTs: \(\Pr(\mathrm{Raining})\), \(\Pr(\mathrm{Ballgame})\), \(\Pr(\mathrm{Traffic} \mid \mathrm{Ballgame},\mathrm{Raining})\).

Question 1 (2 points): Bayes Net Structure

Implement the constructBayesNet function in inference.py. It constructs an empty Bayes Net with the structure described below. A Bayes Net is incomplete without the actual probabilities, but factors are defined and assigned by staff code separately; you don’t need to worry about it. If you are curious, you can take a look at an example of how it works in printStarterBayesNet in bayesNet.py. Reading this function can also be helpful for doing this question.

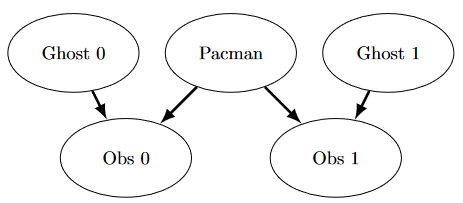

The simplified ghost hunting world is generated according to the following Bayes net:

Don’t worry if this looks complicated! We’ll take it step by step. As described in the code for constructBayesNet, we build the empty structure by listing all of the variables, their values, and the edges between them. This figure shows the variables and the edges, but what about their domains?

- Add variables and edges based on the diagram.

- Pacman and the two ghosts can be anywhere in the grid (we ignore walls for this). Add all possible position tuples for these.

- Observations here are non-negative, equal to Manhattan distances of Pacman to ghosts \(\pm\) noise.

Grading: To test and debug your code, run

python autograder.py -q q1

Question 2 (3 points): Join Factors

Implement the joinFactors function in factorOperations.py. It takes in a list of Factors and returns a new Factor whose probability entries are the product of the corresponding rows of the input Factors.

joinFactors can be used as the product rule, for example, if we have a factor of the form \(\Pr(X\mid Y)\) and another factor of the form \(\Pr(Y)\), then joining these factors will yield \(\Pr(X,Y)\). So, joinFactors allows us to incorporate probabilities for conditioned variables (in this case, \(Y\)). However, you should not assume that joinFactors is called on probability tables – it is possible to call joinFactors on Factors whose rows do not sum to 1.

Grading: To test and debug your code, run

python autograder.py -q q2

It may be useful to run specific tests during debugging, to see only one set of factors print out. For example, to only run the first test, run:

python autograder.py -t test_cases/q2/1-product-rule

Hints and Observations:

- Your

joinFactorsshould return a newFactor. - Here are some examples of what

joinFactorscan do:joinFactors\((\Pr(X \mid Y), \Pr(Y)) = \Pr(X,Y)\)joinFactors\((\Pr(V,W \mid X,Y,Z), \Pr(X,Y \mid Z)) = \Pr(V,W,X,Y \mid Z)\)joinFactors\((\Pr(X \mid Y,Z), \Pr(Y)) = \Pr(X,Y \mid Z)\)joinFactors\((\Pr(V \mid W), \Pr(X \mid Y), \Pr(Z)) = \Pr(V,X,Z \mid W,Y)\)

- For a general

joinFactorsoperation, which variables are unconditioned in the returnedFactor? Which variables are conditioned? Factors store avariableDomainsDict, which maps each variable to a list of values that it can take on (its domain). AFactorgets itsvariableDomainsDictfrom theBayesNetfrom which it was instantiated. As a result, it contains all the variables of theBayesNet, not only the unconditioned and conditioned variables used in theFactor. For this problem, you may assume that all the inputFactors have come from the sameBayesNet, and so theirvariableDomainsDictsare all the same.

Question 3 (2 points): Eliminate (not ghosts yet)

Implement the eliminate function in factorOperations.py. It takes a Factor and a variable to eliminate and returns a new Factor that does not contain that variable. This corresponds to summing all of the entries in the Factor which only differ in the value of the variable being eliminated.

Grading: To test and debug your code, run

python autograder.py -q q3

It may be useful to run specific tests during debugging, to see only one set of factors print out. For example, to only run the first test, run:

python autograder.py -t test_cases/q3/1-simple-eliminate

Hints and Observations:

- Your eliminate should return a new

Factor. eliminatecan be used to marginalize variables from probability tables. For example:eliminate\((\Pr(X,Y \mid Z), Y) = \Pr(X \mid Z)\)eliminate\((\Pr(X,Y \mid Z), X) = \Pr(Y \mid Z)\)

- For a general eliminate operation, which variables are unconditioned in the returned Factor? Which variables are conditioned?

- Remember that

Factors store thevariableDomainsDictof the originalBayesNet, and not only the unconditioned and conditioned variables that they use. As a result, the returnedFactorshould have the samevariableDomainsDictas the inputFactor.

Question 4 (2 points): Variable Elimination

Implement the inferenceByVariableElimination function in inference.py. It answers a probabilistic query, which is represented using a BayesNet, a list of query variables, and the evidence.

Grading: To test and debug your code, run

python autograder.py -q q4

It may be useful to run specific tests during debugging, to see only one set of factors print out. For example, to only run the first test, run:

python autograder.py -t test_cases/q4/1-disconnected-eliminate

Hints and Observations:

- The algorithm should iterate over hidden variables in elimination order, performing joining over and eliminating that variable, until the only the query and evidence variables remain.

- The sum of the probabilities in your output factor should sum to 1 (so that it is a true conditional probability, conditioned on the evidence).

- Look at the

inferenceByEnumerationfunction ininference.pyfor an example on how to use the desired functions. (Reminder: Inference by enumeration first joins over all the variables and then eliminates all the hidden variables. In contrast, variable elimination interleaves join and eliminate by iterating over all the hidden variables and perform a join and eliminate on a single hidden variable before moving on to the next hidden variable.) - You will need to take care of the special case where a factor you have joined only has one unconditioned variable (the docstring specifies what to do in greater detail).

Submission

This is the first part of the GhostBuster project.

In the next project, you will implement algorithms for performing both exact and approximate inference using Bayes Nets. The code of the next project will be based on your implementations of this project. So we do encouarge you to complete this project carefully and make sure you understand all the details.

After you have successfully passed all test cases in your local autograder, you’re ready to prepare your project for submission. To do this, follow the steps below:

-

Generate a submission token: Run the following command in your terminal to create a token for submission:

python submission_autograder.pyIf the script runs without any issues, it will produce a

bayesnets.tokenfile in your current directory. -

Submit your project: Take the

bayesnets.tokenfile and upload it to the “Project 3” entry on Gradescope.

Remember, the bayesnets.token file is crucial for your project submission. It verifies your work on the autograder and is necessary for the evaluation process.